I’m going to say something most HR guides won’t: the annual review process was already broken before AI entered the picture.

Most managers are reconstructing 12 months of performance from memory, on a deadline, while managing a full workload. The result is reviews that are vague, inconsistent, and nearly useless for actual employee development.

AI for performance reviews changes this. Not by replacing the manager’s judgment, but by eliminating the parts of the process that were eating time without adding value: data aggregation, draft generation, bias detection, scheduling, and 360-degree feedback analysis.

Today, the question is no longer whether to use AI in performance management. It’s how to use it without losing the human judgment that makes reviews meaningful.

Who This Is For

- HR managers at growth-stage companies building or fixing their review cycles

- People operations leads replacing spreadsheets with performance management software

- Team leads managing employee evaluations at scale for the first time

- Talent development managers connecting review outcomes to development plans

- Founders and operations managers without a dedicated HR team who still need a structured process

What Are AI Performance Reviews and How Do They Work in Modern HR Systems?

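AI performance reviews use AI to handle the preparation work around an evaluation, such as gathering data, drafting, checking language, analyzing feedback, and running logistics, while the manager keeps the judgment. Here is what that looks like in practice: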

- Data synthesis: AI pulls together a full review period of check-in notes, OKR progress, and feedback submissions into a structured manager brief before a word is written.

- Draft generation: Managers start with an editable document, not a blank page, using a structured input like the SBI framework.

- AI bias detection in reviews: Gendered language, vague qualifiers, and inconsistent phrasing get flagged before drafts go out.

- 360-degree feedback analysis: AI reads across all rater responses and surfaces recurring themes, outliers, and gaps between self-assessment and peer perception.

- Logistics automation: Review cycles run on schedule with automated reminders, completion tracking, and escalation for overdue submissions.

Note: What AI does not do, and this matters just as much, is accurately evaluate someone it doesn’t know. It cannot replace judgment in nuanced situations, such as performance during a restructuring, personal context, or interpersonal dynamics. And it cannot be held accountable. Every AI-generated performance review that reaches an employee needs a human name behind it.

Why Should HR Teams Use AI Tools for Writing Performance Evaluations?

I’ll be direct about what we are actually solving here. The annual review is not broken because managers don’t care. It’s broken because the process design sets them up to fail.

- Recency bias: Without structured data from the full review period, managers unconsciously weight the last 30 to 60 days over everything before it. One strong sprint rescues a mediocre year. One rough patch undoes nine good months. AI solves this specifically because it surfaces the full documented record, not what the manager happens to remember.

- The blank page problem: Administrative work is already crowding out everything else. A survey by NASFAA in 2025 found that 91% of respondents said the time and resources required for administrative processes had increased over the past five years, with many citing compliance and reporting workloads as major operational challenges. Writing eight detailed, individualized employee evaluations adds 8 to 12 hours of writing time on top of that full workload. AI writing assistance for HR cuts this to a fraction.

- Scattered data: Performance information lives across Slack messages, one-on-one notes, project tools, email threads, and imperfect memory. There is no single place to look when review season arrives. Embedded AI fixes this at the source.

- Inconsistency at scale: Without a shared framework, reviews across a management team vary wildly. Some are three paragraphs. Some are three sentences. Employees compare notes, and the inconsistency destroys confidence in the entire manager feedback process.

The organizations I see get this right are the ones who treat AI as infrastructure for the review process, not a shortcut through it. The goal is not to write reviews faster. It’s to write reviews that are more accurate, more consistent, and more useful for actual employee development.

When Should Managers Rely on AI During the Performance Review Process?

Not at every stage. The manager feedback process has distinct phases, and AI earns its place at specific points. Here is the full implementation framework.

AI Review Implementation Checklist

| Phase | AI Responsibility | Human Responsibility |
| --- | --- | --- |
| Data Collection | Aggregating check-in notes, OKR hits, Slack kudos, goal progress | Verifying context of missed goals and unusual periods |
| Drafting | Converting SBI notes to structured prose | Adjusting tone for individual relationships and sensitivities |
| Bias Review | Flagging gendered or inconsistent language across drafts | Final content review and sign-off |
| 360 Analysis | Surfacing themes and gaps across multi-rater feedback | Interpreting context and deciding what to address in conversation |
| Development Planning | Suggesting focus areas and learning activities from review outcomes | Validating suggestions against the employee’s actual goals |
| Logistics | Scheduling, reminders, completion tracking, escalation | Handling exceptions and conversations the system cannot automate |

Stage 1: Pre-Review Data Aggregation

This is where AI saves the most time. Before I write a word of any review, I want the full picture of what actually happened during the review period, not what I remember.

Use this prompt when your platform has access to employee records: “Summarize [Employee Name]’s goal progress, check-in notes, and feedback from the past review period. Organize into: key accomplishments with evidence, development areas with specific examples, and open questions I should address in the review conversation. Do not infer anything not present in the documented data.”

The last sentence is the most important one. You want organization, not invention.
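For teams that assemble this prompt from exported records rather than typing it by hand, a minimal sketch might look like the following. The `ReviewRecord` container and `build_aggregation_prompt` function are hypothetical names used for illustration only, not part of any platform's API. The point it shows is that empty categories are stated explicitly so the model is not tempted to fill them in.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewRecord:
    """Hypothetical container for one employee's documented review-period data."""
    employee: str
    goal_progress: list[str] = field(default_factory=list)
    check_in_notes: list[str] = field(default_factory=list)
    feedback: list[str] = field(default_factory=list)


def build_aggregation_prompt(record: ReviewRecord) -> str:
    """Assemble the Stage 1 prompt from documented records only."""

    def section(title: str, items: list[str]) -> str:
        # Say so when a category is empty instead of leaving a silent gap.
        body = "\n".join(f"- {item}" for item in items) if items else "- (no documented entries)"
        return f"{title}:\n{body}"

    data = "\n\n".join([
        section("Goal progress", record.goal_progress),
        section("Check-in notes", record.check_in_notes),
        section("Feedback", record.feedback),
    ])
    return (
        f"Summarize {record.employee}'s goal progress, check-in notes, and feedback "
        "from the past review period. Organize into: key accomplishments with evidence, "
        "development areas with specific examples, and open questions I should address "
        "in the review conversation. "
        "Do not infer anything not present in the documented data.\n\n"
        f"{data}"
    )
```

Calling `build_aggregation_prompt(ReviewRecord(employee="Alex Example", goal_progress=["Q1 OKR: 80% complete"]))` returns the instruction text followed by the three labeled sections, with the two empty ones marked as having no documented entries.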

Stage 2: Drafting the Review

AI writing assistance for HR works best with a structured input framework. SBI (Situation, Behavior, Impact) produces the most accurate and defensible drafts.

Manager prompt using SBI: “Using these notes [paste notes], draft performance review feedback that follows the Situation-Behavior-Impact format. Keep tone constructive and specific. Do not use generic phrases. If you lack enough detail to make a specific claim, leave a placeholder rather than guessing.”

The placeholder instruction matters. Generic output is the most consistent complaint from managers who try AI-assisted reviews without this constraint. Instruct the AI to flag what it doesn’t know rather than fill it in.
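The same placeholder idea can be applied to the notes before they ever reach the AI. The sketch below is one possible way to do that, assuming notes are captured as three optional text fields. `SBINote` and the `[NEEDS DETAIL]` marker are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed marker text; any string the manager will recognize works.
PLACEHOLDER = "[NEEDS DETAIL]"


@dataclass
class SBINote:
    """One Situation-Behavior-Impact note; any field may be missing."""
    situation: Optional[str] = None
    behavior: Optional[str] = None
    impact: Optional[str] = None

    def missing_fields(self) -> list[str]:
        """Names of the SBI fields that are empty or whitespace-only."""
        return [
            name for name in ("situation", "behavior", "impact")
            if not (getattr(self, name) or "").strip()
        ]

    def render(self) -> str:
        """Format the note for pasting into a prompt, marking gaps explicitly."""
        def value(text: Optional[str]) -> str:
            return text.strip() if text and text.strip() else PLACEHOLDER

        return (
            f"Situation: {value(self.situation)}\n"
            f"Behavior: {value(self.behavior)}\n"
            f"Impact: {value(self.impact)}"
        )
```

A note with no recorded impact renders with `Impact: [NEEDS DETAIL]`, which gives the manager a visible gap to fill before the draft goes anywhere.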

Stage 3: 360-Degree Feedback Analysis

Manually reading and synthesizing feedback from 8 to 10 raters per employee is one of the most time-consuming parts of any review cycle. AI analysis here changes what’s operationally possible.

Ask AI to surface from your 360 data: “Across all rater responses for [Employee Name], identify: (1) competencies where ratings from all groups align, (2) areas where self-rating diverges significantly from peer or manager ratings, and (3) feedback that is too vague to act on. Flag these separately rather than averaging them.”
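Item (2) in that prompt is simple arithmetic, so it can also be checked independently of the AI. The sketch below assumes numeric ratings on a shared scale, a data layout of competency to rater group to scores, and a threshold of 1.0 point for "significant". All three are assumptions to adjust for your own rating scheme.

```python
from statistics import mean


def self_vs_others_gaps(ratings: dict, threshold: float = 1.0) -> dict:
    """Return competencies where the self-rating diverges from other raters.

    ratings: {competency: {"self": score, "peer": [scores], "manager": [scores]}}
    gap = self score minus the mean of all non-self scores.
    Only gaps with absolute value >= threshold are returned.
    """
    gaps = {}
    for competency, by_group in ratings.items():
        others = [
            score
            for group, scores in by_group.items()
            if group != "self"
            for score in scores
        ]
        if "self" not in by_group or not others:
            continue  # nothing to compare against
        gap = float(by_group["self"]) - mean(others)
        if abs(gap) >= threshold:
            gaps[competency] = round(gap, 2)
    return gaps
```

A positive gap means the employee rates themselves higher than others do, and a negative gap means lower. Either direction is worth raising in the conversation, which is why the sketch reports them separately per competency rather than averaging them away.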

Stage 4: Employee Self-Evaluations

Most guides on AI for performance reviews focus entirely on the manager. That’s a gap worth fixing because self-evaluation is genuinely hard. Articulating impact without overselling it, or underselling it because self-promotion feels uncomfortable, is a specific writing skill most employees haven’t practiced.

Self-evaluation prompt for employees: “Here is a bulleted list of my contributions from the past year [paste list]. Turn these into a self-evaluation narrative that highlights patterns of impact and growth. Use outcome-oriented language throughout. Do not exaggerate or invent. If anything is unclear, ask a clarifying question rather than assuming.”

Writing tip: Replace activity language with outcome language. “Managed the onboarding process” becomes “Reduced onboarding time by three weeks by standardizing the new hire workflow.” The second is specific, verifiable, and lands differently in a calibration meeting.

Stage 5: Development Plan Drafting

Most managers write the review, have the conversation, and then do nothing structured with the outcomes. AI can build the bridge between the review and hyper-personalized development paths.

Post-review development planning prompt: “Based on this completed performance review [paste], suggest three specific development focus areas for the next six months. For each, suggest one observable behavior change and one type of learning activity. Keep suggestions realistic for someone in [role/level].”

This shifts the manager’s role from clerk to coach. The review becomes the input to a development conversation, not the end of the process.

Where Does AI Add the Most Value in the Performance Review Workflow?

I get asked this a lot, and the honest answer is: in the places managers hate most and do worst under time pressure.

Highest-value applications:

- Pre-review data synthesis, where a year of scattered information gets organized before a word is written

- 360-degree feedback analysis, where AI reads across all raters and surfaces themes a human would miss under deadline pressure

- Language consistency review, where AI bias detection in reviews catches gendered phrasing and vague qualifiers across dozens of drafts before they go out

- Scheduling and reminder automation, where review cycles run on time without HR manually chasing completions

- Post-review development planning, connecting review outcomes to actual next steps rather than filing them in a folder

Lower-value, higher-risk applications:

- Writing full reviews from scratch without real employee data. This is where hallucinations happen and where trust gets destroyed.

- Making promotion or compensation recommendations without a documented human decision trail.

- Replacing the review conversation. Nothing does this.

What Are the Risks of Using AI in Employee Evaluations?

Let me be straight with you. There are real risks here, and the way to handle them is not to avoid AI. It’s to know exactly what you’re dealing with and have a fix ready before it becomes a problem.

Risk-Mitigation Matrix

| Risk | What Actually Happens | The Fix |
| --- | --- | --- |
| Hallucination | AI invents accomplishments or details not in your data | Use platforms with RAG (Retrieval-Augmented Generation) that force AI to draw only from actual employee files. Verify every specific claim before the review goes out. |
| Bias Amplification | AI reproduces historical rating patterns, including bias already in your records | Enable AI bias detection in reviews at the platform level. Periodically audit rating distributions across demographic groups, not just individual review language. |
| Data Privacy | Employee PII entered into a public AI tool may be used for model training | Use enterprise-hosted AI tools, not consumer-grade ones. Check your organization’s data policy before using any public LLM with employee records. |
| Undisclosed Use | Employees recognize AI-generated content and lose trust in the manager and process | Disclose that AI assisted with drafting and that you reviewed and own the final content. The transparency lands fine. The deception does not. |
| Nominal Oversight | Managers skim and approve AI drafts without actually reading them | Define what “review and approval” requires operationally. A rubber stamp is not oversight and it will not protect the organization legally. |

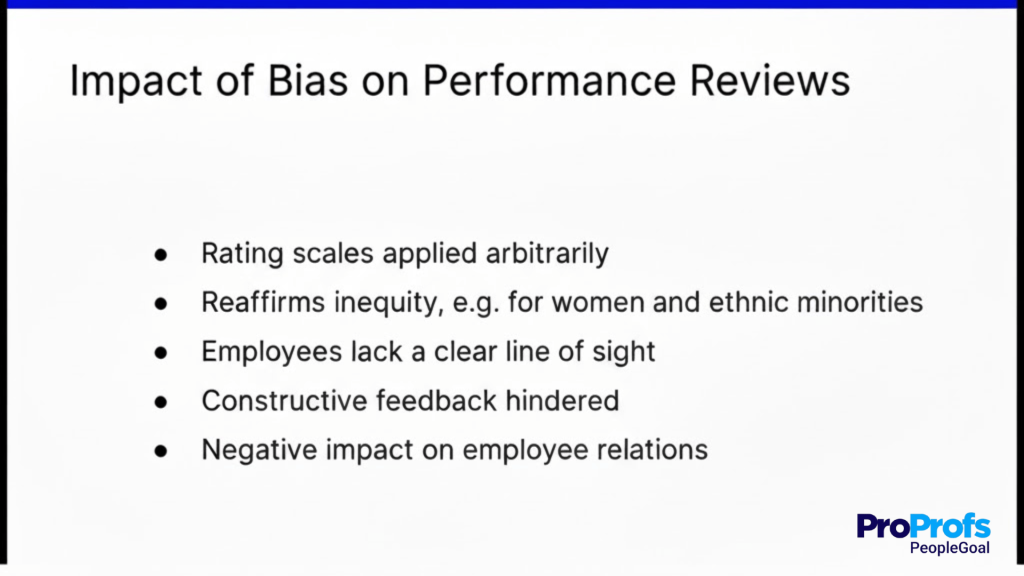

How Can Companies Prevent Bias in AI-Generated Performance Reviews?

I want to go deeper than “use bias detection tools” here because that answer misses most of the actual problem.

AI bias detection in reviews catches language-level issues: gendered phrasing, inconsistent adjective use, vague qualifiers. It does not catch structural bias.

If your organization has historically rated certain groups lower on leadership potential or visibility, the AI learns from that history. Detection features show you the symptoms. They do not fix the cause.
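To make the language-level versus structural distinction concrete, here is a minimal sketch of what a language-level check amounts to. It is not how any particular platform implements detection. The two word lists are illustrative assumptions, not a validated lexicon, and a real team would choose its own terms.

```python
import re

# Illustrative term lists only (assumptions, not a validated lexicon).
VAGUE_QUALIFIERS = ["somewhat", "fairly", "generally", "sometimes", "kind of", "a bit"]
REVIEW_FOR_GENDER_CODING = ["abrasive", "bossy", "emotional", "aggressive"]


def flag_language(draft: str) -> list[tuple[str, str, int]]:
    """Return (category, matched term, character offset) for each flagged term.

    Matching is case-insensitive and whole-word; results are sorted by offset.
    """
    findings = []
    categories = (
        ("vague", VAGUE_QUALIFIERS),
        ("gender-coded", REVIEW_FOR_GENDER_CODING),
    )
    for category, terms in categories:
        for term in terms:
            pattern = rf"\b{re.escape(term)}\b"
            for match in re.finditer(pattern, draft, flags=re.IGNORECASE):
                findings.append((category, match.group(0).lower(), match.start()))
    return sorted(findings, key=lambda finding: finding[2])
```

A check like this only sees the words on the page. It has no view of who received which rating, which is exactly why it cannot surface structural bias.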

A practical approach that actually works:

Step 1: Audit your historical rating data before deploying AI. Run a basic distribution analysis across gender, tenure, department, and role level. If patterns exist in your records, know about them before you train or deploy any AI model on top of them.

Step 2: Use structured input frameworks. SBI, competency-based rubrics, and documented goal data give AI less room to infer. Unstructured prompts produce unstructured output, and unstructured output carries the highest bias risk.

Step 3: Enable AI bias detection features at the platform level so they run on every draft before the review cycle closes, not after a complaint.

Step 4: Review distributions periodically, not just individual reviews. One biased review is an error. A pattern is a systemic problem that individual review checks will never catch.

Step 5: Keep humans accountable in a real and documented way. The most important safeguard is the simplest: every review that goes to an employee has a human who reads it, edits it, and can defend it in conversation. Ethical AI in HR depends on that accountability being genuine.
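The distribution checks in Steps 1 and 4 do not need special tooling to get started. A basic version, sketched below, groups ratings by one attribute and compares group means. The field names (`rating`, `department`) and the record layout are assumptions about how your export looks.

```python
from collections import defaultdict
from statistics import mean


def rating_distribution(reviews: list[dict], group_key: str) -> dict:
    """Group numeric ratings by one attribute and summarize each group.

    reviews: list of dicts, each with a numeric "rating" and the group_key field.
    Returns {group: {"count": n, "mean": average rating}}.
    """
    buckets = defaultdict(list)
    for review in reviews:
        buckets[review[group_key]].append(review["rating"])
    return {
        group: {"count": len(ratings), "mean": round(mean(ratings), 2)}
        for group, ratings in buckets.items()
    }


def largest_gap(distribution: dict) -> float:
    """Difference between the highest and lowest group means."""
    means = [stats["mean"] for stats in distribution.values()]
    return round(max(means) - min(means), 2) if means else 0.0
```

Run it once per attribute (gender, tenure band, department, role level). A gap between groups is a prompt for human investigation rather than proof of bias, especially when group counts are small, which is why the count is reported alongside each mean.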

Which AI Tools Are Best for Employee Performance Management?

The best AI tools for performance reviews are not HR platforms with built-in AI. They are general-purpose AI assistants that managers already use, and the difference is in how you feed them data and what you ask them to do.

Before getting to the tools, one quick thing that actually matters: the AI is only as good as the data you give it. If you paste in scattered notes from memory, you get a generic review.

If you bring in structured data like goal progress, check-in summaries, and feedback from multiple raters, you get something useful.

That is why teams using PeopleGoal get more out of these tools. All the relevant employee data is already organized in one place, so what goes into the AI prompt is accurate, consistent, and complete.

The AI Tools Worth Using:

1. ChatGPT (GPT-4o)

ChatGPT is the most versatile option I have seen for writing and refining performance reviews. Give it a structured prompt with goal outcomes, key behaviors, and project contributions, and it produces well-written, calibrated narratives in seconds. It handles tone adjustments, bias checks, and length calibration well. Best for: drafting review narratives and flagging vague or biased language before submission.

2. Claude (Anthropic)

Claude excels at nuanced, longer-form writing and is particularly good at maintaining consistency across multiple reviews in a single session. I find it especially useful when reviewing large teams because it keeps language calibrated across different employees without drifting in tone. Best for: bulk review drafting and consistency checks across a team.

3. Gemini (Google)

Gemini integrates naturally into Google Workspace, which makes it practical for teams already running review processes in Docs or Sheets. It can summarize feedback comments, draft review language, and flag gaps directly inside existing documents. Best for: teams with Google Workspace-based workflows.

4. Microsoft Copilot

Copilot serves the same function for Microsoft 365 environments. If your review templates live in Word or your check-in notes are in Teams, Copilot can pull context from those documents and draft review content without switching tools. Best for: organizations running HR processes inside Microsoft 365.

5. Notion AI

Notion AI is useful for teams that track goals, OKRs, and check-in notes inside Notion. It can summarize a quarter’s worth of entries, identify themes, and produce a structured review draft from existing workspace content. Best for: startups and mid-size teams using Notion as their operating system.

The consistent pattern I see across all of them is that output quality is a direct function of input quality.

When structured performance data exists, with clearly documented goals, regular check-in records, and multi-rater feedback, these tools produce reviews that are accurate and defensible. When managers paste in last-minute recollections, the output reflects that.

That is the role PeopleGoal plays in this stack. It is not an AI review tool. It is the system that makes your employee data structured and accessible so that when you do use any of the tools above, the inputs are worth something.

Build More Accurate and Meaningful Performance Reviews With AI

AI for performance reviews is not going to fix a broken performance review process on its own. What it can do is remove the administrative work that keeps managers from giving thoughtful, consistent, and development-focused feedback in the first place. The biggest takeaway is this: AI works best when it has access to structured, year-round employee data instead of rushed end-of-year notes.

That is why the foundation matters. Platforms like PeopleGoal help teams centralize goals, check-ins, 360-degree feedback, review cycles, and reporting in one system, making it easier for managers and AI tools to work from the same reliable source of truth. The result is reviews that are more accurate, fair, and actionable.

If you are exploring AI-generated performance reviews, start by evaluating your current review workflow, data quality, and manager experience before choosing the technology layer.

Frequently Asked Questions

How can AI improve the accuracy of employee performance evaluations?

Performance review AI improves accuracy by synthesizing documented goal progress, check-in notes, and feedback from the full review period instead of relying on memory. It also flags vague or inconsistent language before reviews go out. The core condition: the AI needs access to structured, documented records, not pasted summaries.

Why should HR teams use AI tools for writing performance reviews?

Because the administrative burden of manual reviews directly degrades their quality. When managers spend hours reconstructing a year from memory, the review becomes a documentation exercise. AI handles the preparation so managers can focus on what actually matters: the development conversation.

What is an AI performance review generator?

An AI performance review generator is a tool that produces draft employee evaluations from structured input: goal data, check-in notes, SBI-formatted feedback, or uploaded performance records. Quality depends entirely on what data you feed it. Generators working from documented records produce accurate drafts. Generators working from pasted notes produce generic ones.

What are the biggest risks of using AI in employee evaluations?

The five main risks are hallucination (AI inventing details), bias amplification (reproducing historical patterns), data privacy violations from using public tools with employee PII, damaged employee trust from undisclosed AI use, and nominal oversight where managers approve without reading. All five are manageable with the right platform and process.

Who is responsible for reviewing and approving AI-generated performance feedback?

The manager is fully responsible. Every AI-generated performance review that reaches an employee needs a human who has read it, verified its accuracy, edited it where needed, and is prepared to discuss every point in the follow-up conversation. "The AI wrote it" is not a defense, legally or in the room with the employee.

Why is human oversight necessary in AI-supported performance reviews?

Because AI cannot be held accountable, read a room, or respond to what an employee says in a review conversation. Human-in-the-loop (HITL) oversight is also increasingly a regulatory expectation. The EU AI Act classifies AI used in employment decisions as high-risk and requires human oversight of such systems, and US EEOC guidance has taken the position that employers own the outcome of AI-assisted decisions regardless of the tool used.

How can companies prevent bias in AI-generated reviews?

Audit historical rating data before deployment, use structured input frameworks like SBI, enable AI bias detection at the platform level, review rating distributions across demographic groups periodically, and ensure human oversight is real rather than nominal. Language detection catches surface issues. Systemic bias requires human analysis of patterns.

What is the difference between embedded AI and standalone AI for performance reviews?

Embedded AI lives inside your performance management software and draws from actual employee records: goal progress, check-ins, and feedback history. Standalone tools like ChatGPT work from whatever you paste in and have no organizational context. Because it works from documented records, embedded AI tends to produce more accurate output and lowers hallucination risk, though specific claims still need to be verified before a review goes out.

When should managers rely on AI during the performance review process?

AI is most valuable at pre-review data synthesis, structured draft generation, 360-degree feedback analysis, bias language review, and post-review development planning. It should not be used to make final assessments independently, produce unverified claims about specific employees, or substitute for the actual review conversation.
