Most teams don’t fail at performance reviews because they picked a bad evaluation type. They fail because the type they picked doesn’t match how their work actually gets seen, documented, and decided on.

If your last review cycle felt unfair, subjective, or just pointless, the problem wasn’t effort. It was structure. The right evaluation type applied to the wrong situation produces bad data and frustrated people.

This guide helps you match your approach to your actual situation and shows you the bias traps that quietly corrupt even well-designed systems.

Why Your Last Review Cycle Felt Wrong

Before choosing an evaluation type, it’s worth understanding why most review processes break down. Bias is almost always the culprit, and it doesn’t announce itself.

| Bias | What Actually Happens | How to Prevent It |
|---|---|---|
| Recency Bias | Recent wins or mistakes overshadow the full cycle | Require monthly or quarterly documented check-ins; reference those records during final reviews |
| Centrality Bias | Managers rate most people as "average" to avoid hard conversations | Run cross-team calibration sessions where every rating requires a specific example |
| Anchoring Effect | Managers unconsciously adjust their scores after reading self-assessments | Have managers submit independent ratings before viewing self-evaluations |
| Dunning-Kruger Gap | Weaker performers overrate themselves; strong ones underrate | Use scoring rubrics with defined behavioral examples at each level |
| Social Indifference | Peers give vague, positive feedback to protect relationships | Use anonymous, behavior-based forms with structured questions |
| Metric Gaming | Employees hit measurable targets while ignoring actual impact | Pair quantitative goals with qualitative manager criteria |
| Visibility Bias | Employees closer to leadership get better ratings regardless of output | Calibrate ratings across teams; use peer input to surface hidden contributions |
| Annual Distortion | Once-a-year reviews rely on memory, which is unreliable | Shift to continuous documentation and lighter quarterly cycles |

None of these disappear with a better template. They disappear when your evaluation structure is matched to how work actually happens in your organization.
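For teams that keep ratings in a spreadsheet export, one way to prepare for a calibration session is to check how tightly each manager's ratings cluster at the midpoint, which is the pattern centrality bias produces. The sketch below is a minimal illustration, not a standard method: the function names, the 5-point scale with a midpoint of 3, and the 70% threshold are all assumptions chosen for the example.

```python
from collections import Counter


def midpoint_share(ratings, midpoint=3):
    """Return the fraction of ratings that equal the scale midpoint."""
    if not ratings:
        return 0.0
    return Counter(ratings)[midpoint] / len(ratings)


def flag_centrality(ratings_by_manager, midpoint=3, threshold=0.7):
    """List managers whose midpoint share meets or exceeds the threshold.

    The threshold is an illustrative assumption; pick one that fits
    your own rating distribution.
    """
    return sorted(
        manager
        for manager, ratings in ratings_by_manager.items()
        if midpoint_share(ratings, midpoint) >= threshold
    )


# Hypothetical sample data on a 5-point scale.
sample = {
    "manager_a": [3, 3, 3, 3, 4],  # 4 of 5 ratings at the midpoint
    "manager_b": [2, 3, 4, 5, 3],  # 2 of 5 ratings at the midpoint
}

print(flag_centrality(sample))  # ['manager_a']
```

A flag here is only a prompt for discussion. The calibration session still needs a specific example behind each rating.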

What Are the Different Types of Performance Evaluations? (+How to Choose)

Rather than listing every evaluation type that exists, here’s a more useful framework: most organizations fall into one of four situations. Each calls for a different primary approach. While there are many different types of performance evaluations, the key is selecting the one that fits how work is actually observed and measured inside your organization.

Situation 1: One Manager Sees Most of the Work

Recommended approach: Manager-led reviews with documented check-ins

This is the most common starting point for teams of 25 to 150 people. A direct manager has enough visibility to assess results, behaviors, and ownership. The risk is subjectivity when documentation doesn’t happen throughout the year.

Many organizations begin here when exploring the types of employee performance evaluation that can realistically work for their team size and management structure.

What makes it work:

- Regular one-on-ones with written notes

- Goals agreed upon at the start of the cycle

- A rating scale where every level has a concrete behavioral definition

Without those three things, the annual review becomes a memory exercise and the employee feels judged rather than assessed.

Also add: Self-evaluation as a companion. Before the manager review, have the employee document their wins, misses, and what they learned. This surfaces invisible effort, reduces surprises, and shifts the conversation from verdict to dialogue.

Best fit for: Small and mid-sized teams building a formal process for the first time, roles with clear deliverables, and decisions tied to compensation or promotion.

Situation 2: Work Happens Across Teams or Is Invisible to One Manager

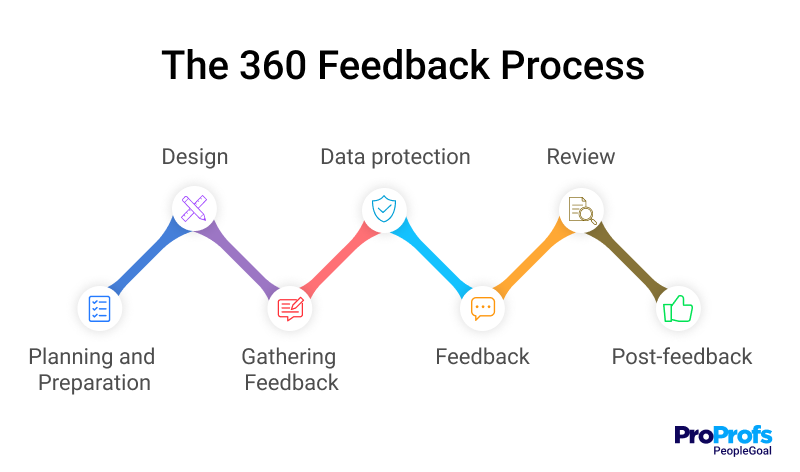

Recommended approach: Peer evaluation or 360-degree feedback

If a manager can only see 40% of someone’s actual contribution because the work is cross-functional, client-facing, or collaborative, a manager-only review produces a 40% picture. Peer input fills the gap.

Peer evaluation works best when questions are behavioral rather than general:

| Instead of this | Use this |
|---|---|
| "Is this person a team player?" | "How does this person handle conflicting priorities?" |
| "Does this person communicate well?" | "Describe a time this person clarified a confusing situation for the team." |

Psychological safety matters here. If people don’t feel safe giving honest feedback, you’ll get uniformly positive responses that tell you nothing.

360-degree feedback expands this further by adding direct reports, stakeholders, and sometimes clients. It’s most valuable for managers and senior leaders. One important rule: do not use it for compensation decisions. The data is too variable across raters. Reserve it for development conversations only.

PeopleGoal automates multi-rater feedback collection, anonymizes responses, and aggregates patterns across raters, which is where the actual insight lives.

Best fit for: Cross-functional teams, remote or hybrid environments, leadership development programs, and any role where a single manager doesn’t have full visibility.

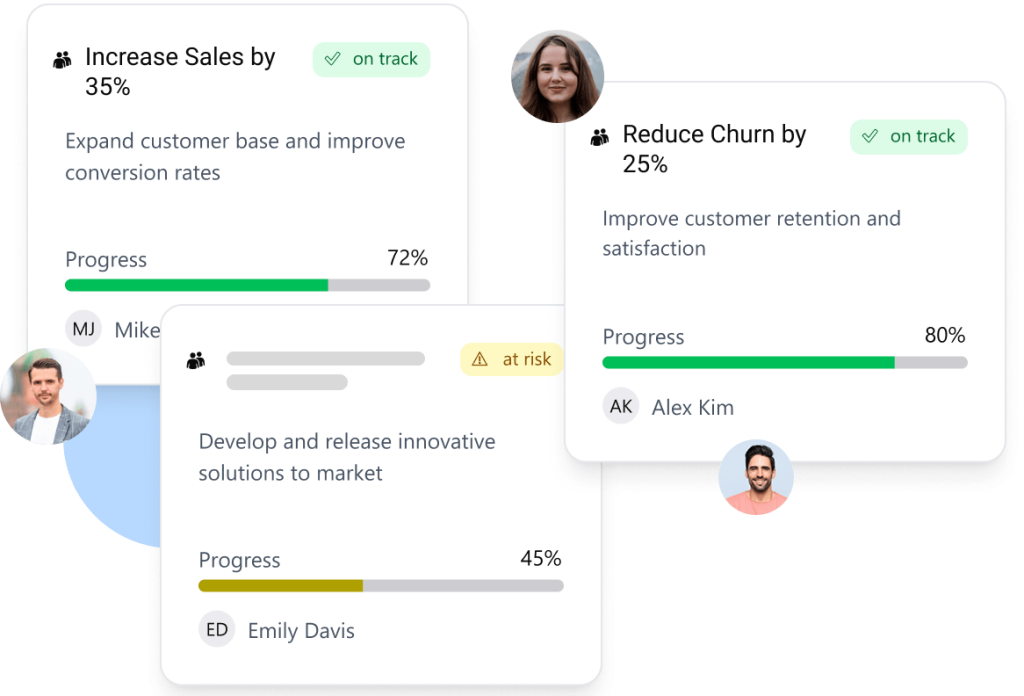

Situation 3: Results Are Measurable and Tied to Business Targets

Recommended approach: Goal-based evaluation with OKRs or SMART goals

When work is measurable, anchoring reviews in agreed-upon objectives removes most of the subjectivity. The conversation shifts from “how do I feel about your performance” to “here’s what we agreed on, here’s what happened.”

Choosing between SMART goals and OKRs:

| SMART Goals | OKRs |
|---|---|
| Individual-level accountability | Team and cross-department alignment |
| Specific, time-bound, practical | Ambitious objective with measurable key results |
| Best for role clarity and short-term targets | Best when multiple people contribute to one outcome |

Watch out for metric gaming. A sales rep closing low-quality deals to hit volume targets is a metric gaming problem, not a performance success. Prevent it by pairing every quantitative goal with at least one qualitative manager criterion.

PeopleGoal keeps goal progress visible throughout the cycle rather than only at review time, which prevents the end-of-year scramble and gives managers accurate data for rating decisions.

Best fit for: Sales, marketing, product, and any role where output is directly measurable and tied to company targets.

Situation 4: You Need Consistency Across a Growing or Dispersed Team

Recommended approach: Competency-based evaluation with calibration

When multiple managers evaluate people in similar roles, “exceeds expectations” can mean something different on every team. Competency frameworks fix this by defining behavioral standards for each role level, making clear what “leads effectively” or “communicates clearly” actually looks like in practice.

This also becomes the right foundation for promotions. Instead of asking “is this person ready?” based on gut feel, you’re asking “does this person consistently demonstrate the behaviors we’ve defined for the next level?”

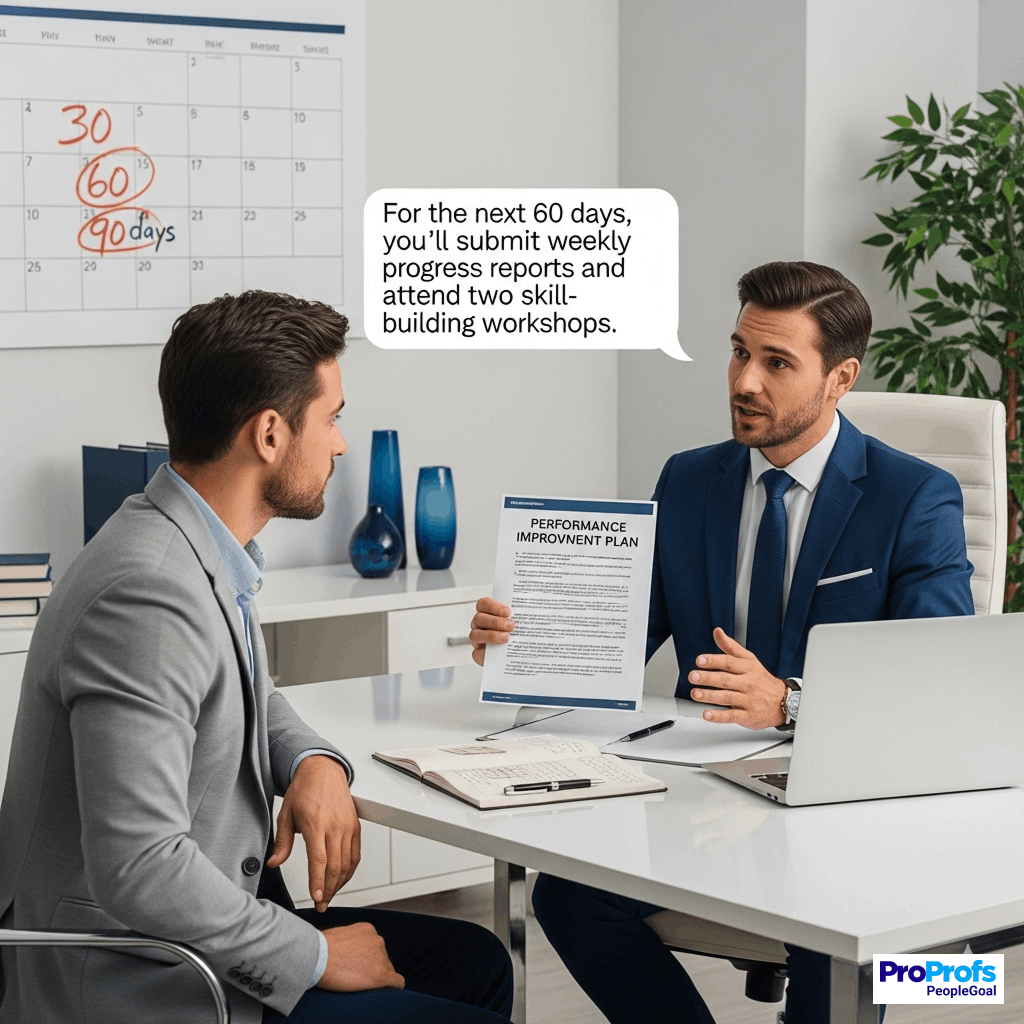

Three things that make it work:

- Defined behaviors at each role level, written in observable terms

- Calibration sessions where managers compare ratings and reconcile differences with evidence. Without this step, the framework becomes decoration.

- Probationary check-ins for new hires at 30, 60, and 90 days, with documentation recorded in real time rather than reconstructed at the six-month mark

Best fit for: Organizations with multiple managers evaluating similar roles, companies formalizing performance evaluation processes for the first time, and any situation where promotion decisions need to hold up to scrutiny.

The Full Evaluation Type Reference

If your situation involves a combination of these, the table below shows how each evaluation type behaves so you can layer them without creating conflicts. It also helps clarify how the most common types of performance evaluation methods differ in focus, bias risk, and practical use.

| Evaluation Type | What It Focuses On | Common Bias Risk | Works Well When | Breaks Down When | Best Use Case |
|---|---|---|---|---|---|
| Manager Evaluation | Direct accountability, results, ownership | Recency bias, power dynamics | Documentation exists throughout the year | Manager has limited visibility into actual work | Compensation and promotion decisions |
| Self-Evaluation | Reflection, ownership, invisible effort | Over/under-rating | Criteria are clearly defined | Criteria are vague or shifting | Development conversations |
| Peer Evaluation | Collaboration and day-to-day behavior | Social pressure, halo effect | Psychological safety is high | People fear retaliation or conflict | Cross-functional and team-based environments |
| 360-Degree Feedback | Leadership influence and patterns | Conflicting input, rater fatigue | Used for development, not pay | Tied to compensation decisions | Leadership development |
| Upward Assessment | Manager effectiveness | Fear of retaliation | Anonymity is genuinely protected | Responses can be traced back | Trust and leadership health |
| Team-Based Evaluation | Collective outcomes | Masking individual gaps | Individual contributions are tracked separately | Accountability isn't clear | Project-driven and interdependent roles |
| Skill-Based Evaluation | Technical capability, compliance | Narrow task focus | Tied to progression and pay bands | Not connected to growth opportunities | Technical, operational, and safety roles |
| Competency-Based Evaluation | Behavioral standards | Subjective interpretation | Behaviors are clearly defined and observable | Behaviors are vague or aspirational | Promotions and leadership tracks |
| Goal-Based (OKRs/SMART) | Measurable objectives | Metric gaming | Goals are set collaboratively, not top-down | Focus on numbers crowds out judgment | Results-driven roles |
| Cascading Goal Evaluation | Strategic alignment | Top-down miscommunication | Goals are clearly communicated at every level | Leadership goals are vague or shift mid-cycle | Enterprise alignment |
| Probationary Evaluation | Early-stage performance fit | Limited observation window | Check-ins are documented in real time | Feedback is saved for a single end-of-probation conversation | First 90 days |
| Promotion & Succession | Future readiness | Visibility bias | Multiple leaders calibrate together | Decisions rest on one manager's view | Talent planning |
| High-Potential Assessment | Long-term leadership capacity | Political influence | Backed by behavioral evidence | Based on perception or proximity to leadership | Executive pipeline |
| Engagement-Linked Evaluation | Sentiment and retention signals | Overcorrecting for morale | Used as a development signal, not a score | Tied to compensation | Retention-sensitive and hybrid teams |

Which Performance Rating Scale Should Managers Use?

The evaluation type determines what you measure. The rating scale determines how consistently you measure it across managers. A mismatch here is where most calibration problems start, which is why organizations often combine several types of performance evaluation systems to balance structure, fairness, and practical usability.

1. 5-point scale: The most common option. It works well for compensation and promotion decisions where you need precision. The problem is that managers interpret the middle point differently unless you define what “3” looks like in specific behaviors and results, not just “meets expectations.”

2. 3-point scale: This reduces rating debates and speeds up cycles. It’s a good fit for growing teams looking to reduce admin load. The tradeoff is lower precision, which frustrates leaders during pay discussions unless written evidence accompanies every rating.

3. Behaviorally Anchored Rating Scales (BARS): These tie each level to specific observable examples. This is the strongest approach for competency-based evaluation because it narrows the room for subjective interpretation. It takes more setup, but it dramatically improves consistency across managers.

4. Score plus narrative: This is what the best systems actually do in practice. The number gives you a summary. The written examples tell you why and make the review defensible when challenged.

One practical rule: if ratings influence pay, every level needs a behavioral definition with examples. If the review is primarily for development, simplify the scale and invest in the written narrative instead.
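If your rating scale lives in a config file or spreadsheet, the rule above can be checked mechanically before a cycle opens. The following is a minimal sketch under stated assumptions: `RatingLevel`, `levels_missing_examples`, and `scale_is_pay_ready` are hypothetical names, and the level labels and example behaviors are made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class RatingLevel:
    """One level of a rating scale with its behavioral anchors."""
    score: int
    label: str
    behavioral_examples: list = field(default_factory=list)


def levels_missing_examples(levels):
    """Return the scores of levels that have no behavioral example."""
    return [lvl.score for lvl in levels if not lvl.behavioral_examples]


def scale_is_pay_ready(levels):
    """A scale is usable for pay decisions only if every level is anchored."""
    return bool(levels) and not levels_missing_examples(levels)


# Illustrative 3-point scale; labels and examples are assumptions.
scale = [
    RatingLevel(1, "Below expectations",
                ["Missed agreed deadlines without flagging the risk"]),
    RatingLevel(2, "Meets expectations",
                ["Delivered agreed goals on time with documented check-ins"]),
    RatingLevel(3, "Exceeds expectations"),  # no example written yet
]

print(levels_missing_examples(scale))  # [3]
print(scale_is_pay_ready(scale))       # False
```

The check only confirms that examples exist. Whether they are observable and specific enough still needs a human read.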

How Should Performance Evaluations Change by Industry?

The right mix for a 40-person tech startup is different from a 400-person logistics company. Here’s what to adjust based on your environment.

1. Technology teams

They tend to over-index on output metrics like ticket count, velocity, and story points, while ignoring mentorship, architecture decisions, and long-term system thinking. Combine OKRs with peer feedback to capture both. Add quarterly qualitative reviews alongside the quantitative data.

Where to start: Set quarterly OKRs for each engineer and add one peer feedback question per cycle before building anything else.

2. Manufacturing and operations

These teams face the opposite risk: reducing performance to compliance checklists while ignoring process improvement and teamwork contributions. Anchor reviews in skill certifications and safety records, but layer in team-based evaluation for shift coordination and document improvement suggestions as performance inputs.

Where to start: Begin with monthly supervisor check-ins and a safety-based skill audit before adding anything else.

3. Healthcare

The healthcare industry requires balancing clinical accuracy with teamwork under pressure. Skill audits and manager evaluations cover the technical side. Structured peer reviews with behavioral prompts, not open-ended questions, surface the teamwork dimension without generating inconsistent data.

Where to start: Run a skill audit against defined clinical standards first, then add structured peer review once managers are comfortable with the documentation process.

4. Construction

On large sites, team output tends to overshadow individual accountability. Skill-based criteria for safety compliance, team-based scoring for project milestones, and documented supervisor check-ins per project phase give you both dimensions.

Where to start: Introduce per-phase supervisor check-ins tied to safety compliance before adding team-based scoring.

5. Retail

Retail tends to over-rely on sales numbers. Customer experience and team morale affect long-term performance as much as monthly revenue. Pair sales targets with engagement data, include upward feedback on store leadership, and run monthly lightweight check-ins rather than relying on annual reviews.

Where to start: Replace the annual review with monthly check-ins and add one customer satisfaction metric alongside revenue targets.

6. Consulting and professional services

These firms struggle with visibility bias, where people closest to leadership get recognized while critical backend contributors get overlooked. Project-based evaluations at engagement close, 360 input for senior consultants, and calibration across practice areas correct for this.

Where to start: Introduce a short post-project evaluation at every engagement close before building out a full 360 process.

What Questions Help You Choose an Evaluation Method?

Before building anything, answer these:

1. Who actually sees this person’s work?

If the answer is “primarily their direct manager,” start with manager-led reviews and self-evaluation. If the answer is “multiple teams and stakeholders,” you need peer or 360 input.

2. What decision does this evaluation need to support?

Compensation and promotion decisions need documented evidence and calibrated ratings. Development conversations need narrative depth and behavioral specifics. Using the same system for both usually serves neither well.

3. Do you have documentation from throughout the year, or are you reconstructing it now?

If you’re reconstructing, your first priority isn’t choosing an evaluation type. It’s building a check-in cadence so the next cycle has real data to work from.

Once you have clear answers, the right evaluation approach becomes obvious. The goal isn’t to implement everything. It’s to start with the approach that matches your current situation, run it cleanly, and add complexity only when you’ve outgrown what you have.
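The three questions above can be written down as a small decision helper, which is sometimes useful when several HR partners need to apply the same logic. This is a sketch of this guide's recommendations only. The function name, the argument values (`"manager"`, `"multiple"`, `"compensation"`, `"development"`), and the wording of each step are assumptions made for the example.

```python
def recommend_approach(seen_by, decision, has_year_round_docs):
    """Map answers to the three questions onto an ordered list of steps.

    seen_by: "manager" or "multiple" (who actually sees the work)
    decision: "compensation" or "development" (what the review supports)
    has_year_round_docs: True if check-ins were documented during the cycle
    """
    steps = []

    # Question 3: without documentation, the check-in cadence comes first.
    if not has_year_round_docs:
        steps.append("build a check-in cadence")

    # Question 1: visibility decides who provides input.
    if seen_by == "manager":
        steps.append("manager-led review with self-evaluation")
    elif seen_by == "multiple":
        if decision == "compensation":
            # The guide reserves 360-degree feedback for development.
            steps.append("peer input (keep 360 feedback for development)")
        else:
            steps.append("peer or 360-degree input")
    else:
        raise ValueError(f"unknown seen_by value: {seen_by!r}")

    # Question 2: the decision determines what evidence is needed.
    if decision == "compensation":
        steps.append("documented evidence and calibrated ratings")
    elif decision == "development":
        steps.append("narrative depth and behavioral specifics")
    else:
        raise ValueError(f"unknown decision value: {decision!r}")

    return steps


for step in recommend_approach("multiple", "compensation", False):
    print(step)
```

Treat the output as a starting checklist rather than a verdict. Real situations often mix answers across roles.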

Turn Evaluations Into Real Growth Conversations

When the structure matches the situation, reviews stop being a source of friction and start producing the data that actually supports a promotion conversation or a development plan. Managers stop relying on memory. Employees stop feeling blindsided. And decisions about who is ready for the next level become defensible rather than political. That’s the shift.

Getting there doesn’t require rebuilding everything at once. It requires matching your evaluation type to how work actually happens, building documentation habits throughout the year, and running calibration so ratings mean the same thing across every manager and team. Doing that manually with spreadsheets quickly becomes the bottleneck.

PeopleGoal is built for teams making exactly this transition, from disconnected processes to structured, automated evaluation cycles that handle goal-setting, 360-degree feedback, check-ins, and calibration in one place. Pilot it with one team, refine the structure, and scale with confidence.

Frequently Asked Questions

How do you reduce bias in performance reviews?

Reduce performance review bias by documenting performance continuously, asking managers to submit ratings before reading self-assessments, running cross-team calibration sessions that require examples for ratings, and using structured, behavior-based questions instead of relying on open-ended impressions.

What rating scale should we use?

A 5-point scale works well when reviews influence compensation, but only if each level has clear behavioral examples. A 3-point scale is simpler for new processes, while Behaviorally Anchored Rating Scales offer the most consistency but require more setup.

What’s the best evaluation type for a small or first-time process?

Start with a manager evaluation combined with a self-evaluation. This approach is easy to implement, provides useful insight into performance, and keeps administration manageable. Once the process stabilizes, you can add peer feedback or goal-based evaluation.

We already use an HRIS. Do we need a separate performance tool?

Most HRIS platforms support basic review forms but lack deeper capabilities like goal tracking, multi-rater feedback, cross-team calibration, and performance reporting. If your team relies on manual workarounds for these tasks, a dedicated performance tool can improve the process.

How do we get managers to actually use the review process consistently?

Adoption improves when the process is simple and structured. Keep check-ins short, automate reminders for each stage, and show managers clear examples of completed reviews. Calibration sessions also help because they create shared accountability among managers.

What’s the difference between a performance appraisal and a performance evaluation?

A performance appraisal usually refers to formal, score-driven reviews connected to compensation decisions. A performance evaluation is broader and includes feedback discussions, development planning, and goal setting. Effective systems combine both to support fair decisions and employee growth.
