If performance reviews make your managers break into a light sweat (or a heavy sigh), you’re not imagining it. The evaluation phase is where good intentions collide with messy reality: half-remembered wins, one recent mistake that suddenly feels huge, and a rating conversation that can either energize an employee… or quietly poison motivation for months.

The fix isn’t “do more reviews.” It’s tightening the performance evaluation process so the final stretch is fair, evidence-based, and actually useful.

This guide covers:

- A practical, step-by-step evaluation workflow HR can run consistently

- Bias traps that quietly distort ratings (and what to do about them)

- 2026-ready trends like AI summaries, skills tracking, and dynamic check-ins

Let’s start with what the evaluation phase actually is.

Performance Evaluation Process: What it Means

“If you want it to happen, measure it. If you want it repeated, recognize it.”

— H. J. Chammas

Award-winning author of The Employee Millionaire

Performance evaluation is often treated like the paperwork at the end of a flight: necessary, dull, and best completed quickly. But the evaluation phase is not a formality. It’s the summary period when the organization reviews performance against the established standards and assigns an official outcome (often an employee rating of record).

That rating can affect compensation, promotion eligibility, access to stretch work, and even who gets mentored. But it also does something quieter: it tells employees what your company really values.

If your competency model says “collaboration” matters, but every top rating goes to lone wolves who deliver fast, then congratulations. You’ve taught the culture a lesson. If your values say “customer obsession,” but customer feedback never shows up in evaluations, then that value is decorative.

Now, let’s move forward to understanding the exact performance evaluation process, step by step.

The Standard 7-Step Performance Evaluation Process/Workflow

A strong performance evaluation procedure is boring in the best way: consistent, predictable, and hard to derail. Dedicated performance evaluation tools can help, too: their ready-made evaluation templates make the process easier to run and more engaging.

Here are the 7 steps of the performance evaluation workflow that HR can operationalize across teams, roles, and locations.

| Step | Stage of the Performance Evaluation Process | What This Step Ensures |
|---|---|---|
| 1 | Confirm the performance plan and standards | Employees are evaluated only against goals and competencies that were clearly defined and documented in advance. |
| 2 | Employee self-evaluation | Employees reflect on outcomes, behaviors, constraints, and learning, creating ownership and additional context. |
| 3 | Manager evidence review | Ratings are based on documented performance data, feedback, and outcomes, not memory or recent events. |
| 4 | Secondary or HR review | Inconsistencies, bias risks, and documentation gaps are identified before the evaluation is finalized. |
| 5 | Performance review conversation | Written evaluations are translated into clear, developmental conversations with aligned expectations. |
| 6 | Employee acknowledgment | Employees understand the outcome, their rights, and the process for response or clarification. |
| 7 | Close and document the cycle | Evaluation data feeds into calibration, workforce planning, and the next performance cycle. |

Now, let’s look at these steps in a bit more detail:

Step 1: Start With A Clean Performance Plan In Your System So Nobody Gets Rated On Surprise Standards

Before anyone writes a word of feedback, HR should ensure the performance plan is complete and visible in the system. This is where a lot of chaos starts: missing goals, outdated competencies, or a plan that was never formally acknowledged.

If your organization uses an online employee performance management tool, make sure the plan includes:

- role-aligned objectives (not vague intentions)

- core competencies tied to observable behavior

- any required weighting or rating scales

- mid-year updates or goal changes documented

A plan check may sound administrative, but it’s your first fairness filter. I always say this to managers: you can’t evaluate someone against standards they never had.
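If your system can export plan data, the plan check above can be run as a quick pre-cycle audit. Here is a minimal Python sketch. The `PerformancePlan` fields and the rule that weights should sum to 1.0 are illustrative assumptions, not features of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class PerformancePlan:
    # Hypothetical fields: map them to whatever your system actually stores.
    objectives: list[str] = field(default_factory=list)
    competencies: list[str] = field(default_factory=list)
    weights: dict[str, float] = field(default_factory=dict)  # objective -> weight
    acknowledged_by_employee: bool = False


def plan_gaps(plan: PerformancePlan) -> list[str]:
    """Return human-readable gaps; an empty list means the plan looks complete."""
    gaps = []
    if not plan.objectives:
        gaps.append("no objectives recorded")
    if not plan.competencies:
        gaps.append("no competencies recorded")
    unweighted = [o for o in plan.objectives if o not in plan.weights]
    if unweighted:
        gaps.append(f"objectives without a weight: {unweighted}")
    # Assumption: weights are fractions that should add up to 1.0.
    if plan.weights and abs(sum(plan.weights.values()) - 1.0) > 1e-9:
        gaps.append("weights do not sum to 1.0")
    if not plan.acknowledged_by_employee:
        gaps.append("plan was never acknowledged")
    return gaps
```

Running `plan_gaps` on every plan before the cycle opens gives HR a short fix-it list instead of a surprise in the review meeting.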

Step 2: Guide Employees Through A Self-Evaluation That Builds Ownership Instead Of Anxiety

The employee completes a self-assessment against goals and competencies, usually with commentary and a self-rating. Done well, this adds signal, not noise.

HR can help by offering clear employee self-evaluation steps and guardrails so self-evals don’t become either (a) a humble paragraph of nothing or (b) a 17-page victory manifesto.

A solid self-evaluation typically includes:

- outcomes tied to goals (what happened, with evidence)

- behaviors tied to competencies (how they worked)

- constraints (what got in the way, what they tried)

- learning (what they’d do differently next cycle)

This is also where you can encourage a step-by-step employee self-assessment structure:

| Goal → Actions → Results → Evidence → What I learned → What I want next. |

Step 3: Pull Multi-Source Evidence As A Manager So Your Rating Is About Reality, Not Mood

Now the manager reviews:

- the self-evaluation

- performance notes captured during the year

- outcomes and metrics (where available)

- feedback from others (when appropriate)

This is the moment where managers either do a real evaluation or they default to vibes. In my experience, HR’s job is to make the evidence path easier than the vibe path.

When you do this right, you’re combining multiple performance evaluation methods into one coherent evaluation: goal-based assessment, competency-based assessment, and multi-rater input.

Step 4: Add Secondary Review To Catch Inconsistency Early And Protect Fairness At Scale

This is the fairness checkpoint. A secondary review helps ensure the evaluation is:

- verifiable (supported by examples, not assumptions)

- equitable (consistent across managers)

- compliant (matches policy and required documentation)

This layer matters especially for edge cases: unusually high/low ratings, new managers, performance concerns, promotions, or reorganizations.

It’s also one of your best levers for catching rater bias in appraisals before it lands on an employee’s lap.

Step 5: Run A Strong 1:1 Review Conversation So The Document Turns Into Real Development

The evaluation meeting is where your process becomes human. It’s also where you find out whether the written review was a helpful summary or a surprise attack.

Good meetings cover:

- What went well (specific, repeatable behaviors)

- What needs to change (clear expectations, not personality critique)

- The overall rating and how it was determined

- Future goals and development needs

This is not the moment to “read the form out loud.” The form should support a conversation, not replace it.

Step 6: Use Acknowledgment Correctly So Employees Understand Their Rights Without Derailing The Process

The employee signs or acknowledges the review in the system. In many organizations, acknowledgment is not agreement, and HR should say that plainly. People often panic here because they think clicking “acknowledge” means “I accept your verdict.”

You want employees to understand the process, their next steps, and how to respond if they disagree (without turning that response into a mini-trial).

Step 7: Close The Cycle Cleanly So Next Year’s Planning Starts With A Real Signal

The manager completes the document and closes the cycle. HR then does the behind-the-scenes work that makes next year easier: data audits, calibration learnings, training notes, and process updates.

This is where evaluation becomes a bridge, not a dead end.

Two-line reality check before we go further: Most review cycles don’t fail because HR doesn’t know the steps. They fail because feedback is weak, goals are fuzzy, and bias creeps in quietly, so let’s tighten the inputs.

Many teams also rely on tools like PeopleGoal, which offers ready-made performance evaluation templates in its App Store, to keep the workflow easier to run.

Bonus: A Simple Checklist for Managers

After a few cycles, HR learns the truth: managers don’t need more theory; they need a short checklist that prevents predictable mistakes. Here’s a manager performance review checklist you can give them:

- Did I review goals and expectations that were set at the start (and updated if they changed)?

- Do I have at least 3–5 concrete performance evaluation examples across the year (not just the last month)?

- Did I separate results (what) from behaviors (how)?

- Did I gather input from relevant stakeholders where appropriate?

- Can I explain the overall performance rating calculation in plain language?

- Did I check myself for common bias patterns (recency, halo/horn, central tendency)?

- Is my written feedback clear enough that the employee knows what to repeat and what to change?

That checklist doesn’t make the conversation easy, but it makes it fairer.

Next, we need to talk about the evidence ingredients that stop evaluations from drifting into “manager mood.”

That’s where 360-degree feedback and goal frameworks do much of the heavy lifting.

Strategic Feedback: 360-Degree Reviews & Goal Alignment

One reason evaluations get contentious is simple: managers rarely see the full picture. Employees collaborate cross-functionally, support customers, unblock peers, and quietly fix problems that never make it into a dashboard.

That’s why multi-source input is one of the most practical performance evaluation methods when it’s designed responsibly.

Design 360 Reviews To Add Clarity And Credibility Instead Of Noise

Used well, 360 feedback adds dimension. Used badly, it becomes a popularity contest with better formatting.

Here are 360-degree feedback best practices that keep it useful:

Anonymity where it matters. Peer and subordinate feedback should typically be anonymous to encourage honesty and reduce fear of retaliation. (Managers can still summarize themes without naming names.)

Rater selection with guardrails. Pure manager-only selection can create cherry-picking. Pure employee-only selection can create “friend lists.” A balanced approach is common: the employee suggests raters, the manager approves, HR sets minimums and diversity rules (cross-functional, project-based, internal/external where applicable).

Prompt for behaviors, not adjectives. If your questions invite “Rate Alex’s leadership,” you’ll get vibes. If you ask, “Describe a time Alex influenced a decision without authority,” you’ll get evidence.

Separate development input from rating input when needed. Some organizations use 360 feedback primarily for development to avoid turning it into a political weapon. Others use it as one input to the final rating. Either can work; what matters is clarity.

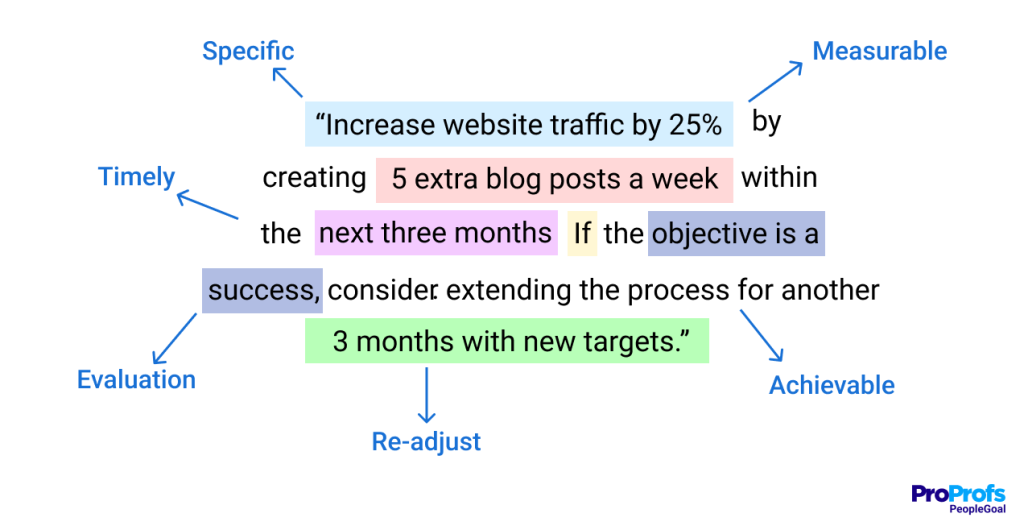

Anchor Ratings In OKRs And SMART Goals So You Can Defend Decisions With Evidence

The fastest way to reduce rating debates is to anchor performance to goals that were measurable from the start. That’s why Objectives & Key Results and SMART goals show up in so many modern frameworks.

- OKRs help you evaluate progress toward objectives and key results over time, which keeps the latest event from dominating the rating.

- SMART criteria (Specific, Measurable, Achievable, Relevant, Time-bound) help ensure goals are evaluable rather than vague.

This is where the phrase SMART rating criteria fits in practice: you’re not just setting SMART goals; you’re evaluating against criteria that make the rating defensible.

A Quick Example: Goal Quality Changes Everything

Vague goal: “Improve customer satisfaction.”

SMART goal: “Increase CSAT from 4.2 to 4.5 by Q4 by reducing first-response time to under 2 hours and launching a weekly escalation review.”

In the vague version, the evaluation becomes interpretive. In the SMART version, the review becomes evidence-based.
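To make "evidence-based" concrete, progress toward a measurable goal can be expressed as the fraction of the distance covered from baseline to target. A small sketch using the CSAT numbers from the example above; the function name and the mid-cycle reading of 4.4 are hypothetical.

```python
def goal_progress(baseline: float, target: float, current: float) -> float:
    """Fraction of the baseline-to-target distance covered, clamped to [0, 1].

    Works for goals that go up (CSAT) and goals that go down (response time).
    """
    if target == baseline:
        raise ValueError("target must differ from baseline")
    fraction = (current - baseline) / (target - baseline)
    return max(0.0, min(1.0, fraction))


# CSAT goal from the example: 4.2 -> 4.5, with a hypothetical reading of 4.4.
csat_progress = goal_progress(baseline=4.2, target=4.5, current=4.4)
print(f"CSAT progress: {csat_progress:.0%}")
```

A number like this doesn't decide the rating, but it gives the manager and the employee the same starting point for the conversation.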

Explain The Overall Rating In Plain Language So Employees Don’t Feel It’s Arbitrary

Even if your rating model is complex (weights, competencies, goals, behavioral anchors), managers should be able to explain the overall performance rating calculation in plain language.

Here’s an example explanation that doesn’t sound like a robot:

“Your results against the three weighted goals put you at ‘Exceeds’ for outcomes. On competencies, you were strong on collaboration and execution, but the feedback consistently noted that you could communicate risk earlier. That’s why the overall rating is ‘Strong’ rather than ‘Top’: it reflects both what you achieved and how consistently you delivered it.”

That kind of explanation reduces defensiveness because it doesn’t feel arbitrary.
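If your model uses weights, the arithmetic behind that explanation should be simple enough to show on one screen. The sketch below is one possible calculation, not a standard: the 1–5 scale, the 70/30 split between goals and competencies, and the sample scores are all assumptions for illustration.

```python
def weighted_score(scores_and_weights):
    """Weighted average of (score, weight) pairs; weights need not sum to 1."""
    total_weight = sum(weight for _, weight in scores_and_weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(score * weight for score, weight in scores_and_weights) / total_weight


def overall_rating(goal_scores, competency_scores, goal_share=0.7):
    """Blend weighted goal results with an unweighted competency average."""
    goals = weighted_score(goal_scores)
    competencies = sum(competency_scores) / len(competency_scores)
    return goal_share * goals + (1 - goal_share) * competencies


# Three weighted goals and three competencies on a hypothetical 1-5 scale.
goals = [(5, 0.5), (4, 0.3), (4, 0.2)]
competencies = [4, 4, 3]
print(round(overall_rating(goals, competencies), 2))
```

Whatever formula you use, the test is the same: can a manager walk an employee through each term without reaching for a spreadsheet?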

Now for the part everyone knows exists, but few processes handle well: bias. Because even with OKRs and 360s, managers are still human, and humans are, politely, messy.

How to Identify & Mitigate Rater Bias in Your Performance Evaluation Process

Bias isn’t always malicious. Most of the time, it’s cognitive shortcuts: the brain trying to simplify a complex year into a tidy story. But those shortcuts can distort ratings, damage trust, and expose the organization to risk.

Let’s name the usual suspects, then talk about what actually works.

1. Reduce Recency Bias By Using Evidence Across The Whole Year, Not Just The Final Month

Recency effect in reviews happens when a manager overweights recent events and underweights everything earlier. The classic scenario: an employee had nine strong months and one rough month in the last quarter, and suddenly the whole year gets reinterpreted as “inconsistent.”

What usually goes wrong: Managers rely on memory instead of documented evidence. Memory is biased toward what’s fresh, emotional, or high-visibility.

Practical fixes:

- Require managers to cite examples from at least 2–3 different quarters.

- Encourage quarterly check-ins that produce notes (not essays).

- Use goal frameworks like OKRs to track progress over time, not just at the end.
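The "examples from different quarters" rule above is easy to check mechanically if evidence notes carry dates. A minimal sketch, assuming calendar quarters (your fiscal calendar may differ) and hypothetical function names:

```python
from datetime import date


def quarters_covered(evidence_dates):
    """Return the set of (year, quarter) pairs the evidence falls into."""
    return {(d.year, (d.month - 1) // 3 + 1) for d in evidence_dates}


def spans_enough_quarters(evidence_dates, minimum=2):
    """True if the cited evidence comes from at least `minimum` distinct quarters."""
    return len(quarters_covered(evidence_dates)) >= minimum


# Two notes from the same quarter do not satisfy a two-quarter minimum.
late_year_only = [date(2025, 11, 3), date(2025, 12, 7)]
print(spans_enough_quarters(late_year_only))
```

A check like this won't judge the quality of the examples, but it does stop a review built entirely on the last six weeks from slipping through.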

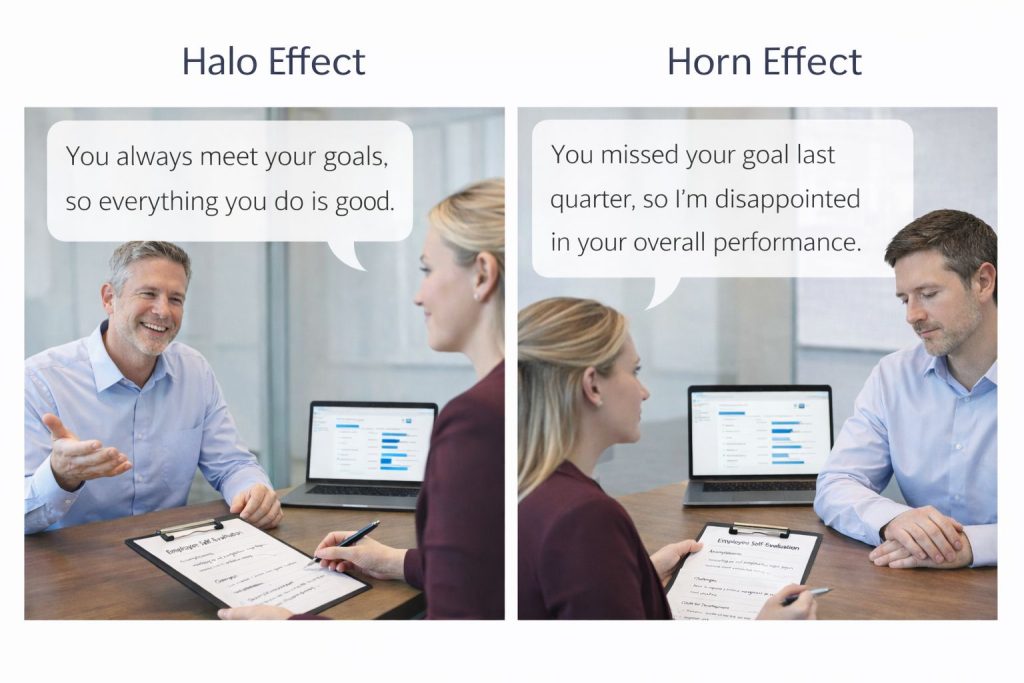

2. Stop Halo And Horn Errors By Separating Outcomes From Behaviors

Halo is when one strength (charisma, confidence, a single big win) makes everything else look better. Horn is the opposite: one mistake colors everything negatively.

If you’ve ever heard, “They’re brilliant, so they must be a great collaborator,” you’ve heard the halo effect. If you’ve heard, “They missed that deadline, so I don’t trust them,” you’ve heard horn.

Mitigating halo and horn effects in evaluations is also one of the highest-ROI manager training topics.

Practical fixes:

- Force separation: rate goals separately from competencies.

- Ask for counter-evidence: “What data would argue the opposite?”

- Use calibration sessions to surface outliers and overgeneralizations.

3. Prevent Spillover Bias By Forcing “This Year” Proof Instead Of Reputation Recycling

Spillover bias is judging current employee performance based on past ratings or reputation. Someone was once a top performer, so they keep getting top ratings, even when the current year is average. Or someone struggled early in tenure and never escapes the “maybe not great” label.

What usually goes wrong: Managers anchor to a previous narrative and stop updating it.

Practical fixes:

- Require “this year” evidence in every section.

- Use a structured template that asks: “What changed since last cycle?”

- Secondary review flags “same rating, same words” patterns.
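The "same rating, same words" flag can be approximated with a plain text-similarity check. This sketch uses `difflib.SequenceMatcher` from Python's standard library; the 0.9 threshold and the function name are assumptions you would tune against your own reviews.

```python
from difflib import SequenceMatcher


def looks_recycled(prev_rating, prev_text, curr_rating, curr_text, threshold=0.9):
    """Flag a review whose rating is unchanged and whose wording is nearly identical."""
    if prev_rating != curr_rating:
        return False
    similarity = SequenceMatcher(None, prev_text.lower(), curr_text.lower()).ratio()
    return similarity >= threshold
```

A flag is a prompt for the secondary reviewer to ask "What changed since last cycle?", not proof that the rating is wrong.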

4. Beat Central Tendency By Calibrating Standards So “Average” Doesn’t Become The Easy Button

Central tendency is the manager who rates everyone as “meets expectations” because it feels safer. No difficult conversations, no justification, no conflict.

But it’s not neutral. It’s demotivating. High performers feel ignored; low performers get a pass; the system loses credibility.

Practical fixes:

- Calibration: compare distributions across managers and teams.

- Coaching: train managers to write specific, behavior-based feedback.

- Accountability: require justification for every rating, not just the extreme ones.
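For the calibration step, a simple distribution check per manager is often enough to start the conversation. The sketch below assumes a 1–5 scale where 3 means "meets expectations"; the 80% cutoff and the minimum team size are illustrative, not recommended values.

```python
from collections import Counter


def rating_distribution(ratings):
    """Share of each rating value, e.g. {3: 0.5, 4: 0.25, 5: 0.25}."""
    counts = Counter(ratings)
    total = len(ratings)
    return {rating: counts[rating] / total for rating in sorted(counts)}


def flag_central_tendency(ratings_by_manager, middle=3, max_share=0.8, min_team_size=5):
    """Return managers who gave the middle rating to at least `max_share` of their team."""
    flagged = []
    for manager, ratings in ratings_by_manager.items():
        if len(ratings) < min_team_size:
            continue  # too few ratings to say anything meaningful
        if ratings.count(middle) / len(ratings) >= max_share:
            flagged.append(manager)
    return flagged
```

Small teams are skipped on purpose: two people both rated "meets" is not a pattern.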

5. Build Bias Mitigation Into The System So Fairness Doesn’t Depend On Manager Memory

Bias mitigation can’t be “a training once a year.” It needs a system design.

The most effective strategies tend to be:

- Structured evidence requirements: ratings must be tied to examples.

- Multi-source input: balanced perspectives reduce single-rater distortion.

- Secondary approvals: HR and reviewing managers catch patterns.

- Calibration sessions: align rating standards across the org.

- Manager enablement: templates, examples, and coaching nudges.

And yes, this is where “good process” quietly protects both employees and the organization.

Next, let’s deal with a modern reality: remote and hybrid work.

Because your evaluation process has to work even when nobody shares an office or context cues.

How to Execute Evaluations In Remote & Challenging Contexts

Remote work didn’t just change where people work; it changed how they work. It changed how performance is observed, how relationships form, and how feedback travels. If you don’t adapt, your evaluation process becomes a visibility contest.

Run Remote Reviews Over Video To Keep Trust High And Misunderstandings Low

If you’re remote, use video for the evaluation meeting whenever possible. Not because you need to police people’s faces, but because video restores enough human signal to keep the conversation empathetic and clear.

A few practical tips that improve the experience:

- Send the written review 24–48 hours ahead (when policy allows).

- Start by aligning on the agenda: outcomes, strengths, growth, rating, and next steps.

- Pause more than you think you need to; latency plus emotion is real.

- Use screen share for goals and examples so the discussion stays grounded.

This is one of those moments where a process detail becomes a trust detail.

Collect Remote Feedback More Deliberately So Quiet Contributions Don’t Disappear

In-office managers pick up context cues: who people collaborate with, how they show up in meetings, and who gets pulled into urgent issues. Remote managers lose those cues unless they intentionally collect them.

That means you need deliberate stakeholder input, especially for cross-functional roles. Instead of “Any feedback on Jamie?”, prompt raters with specific dimensions:

- “What outcomes did you see?”

- “How did Jamie communicate risk or progress?”

- “How did they handle conflict or ambiguity?”

- “What would you want them to do more or less of next cycle?”

This also reduces bias because it pushes raters toward behavior and evidence.

Evaluate With Context In Hard Seasons So You Stay Fair Without Losing Standards

In genuinely challenging contexts (major reorganizations, layoffs, economic volatility, health crises), performance evaluation needs an extra layer of judgment. Not “lower the bar,” but “evaluate with context.”

Managers should be trained to distinguish:

- capability vs. capacity

- effort vs. impact

- temporary disruption vs. persistent pattern

Sometimes the right move is temporarily shifting emphasis toward resilience, learning, and adaptability, especially when goals were invalidated by external events.

Two lines to connect the dots: remote work makes visibility uneven, and hard seasons make outcomes messy. That’s exactly why you need clear tiers and consistent response plans, especially for high performers and struggling employees.

How to Tailor Performance Feedback for Different Performance Tiers

Not every evaluation conversation is the same conversation. A high performer needs challenge and trajectory. A struggling performer needs clarity, support, and consequences that aren’t disguised.

1. Keep High Performers Engaged With Forward-Looking Feedback

High performers don’t leave because you didn’t say “good job.” They leave because growth stalls, recognition feels random, or their work stops mattering.

Your evaluation for a high performer should be forward-looking and skill-based:

- What strengths are becoming “signature” strengths?

- What scope expansion makes sense next?

- What leadership behaviors are ready now, and what needs development?

- What strategic exposure would accelerate them?

This is where strong performance evaluation phrases can help managers communicate clearly without slipping into vague praise.

Examples (that actually say something):

- “Your project execution is reliable under ambiguity; the next step is influencing cross-team prioritization earlier.”

- “You consistently raise the quality bar; I want you to mentor two newer team members on your review process.”

- “Your stakeholder management is a strength; let’s stretch it by having you lead the Q2 roadmap alignment.”

Those phrases point to repeatable behaviors and next-level expectations.

2. Help Solid Performers Grow Faster By Turning “Meets” Into Specific Next Skills

Most employees are in the middle, not because they’re mediocre, but because excellence is situational and distributed. For this tier, your job is to make “meets” feel like progress, not a ceiling.

Strong evaluations here focus on:

- reinforcing what’s working

- naming one or two growth areas that matter

- setting goals that are stretching but realistic

- connecting work to broader business outcomes

A common failure mode is giving generic feedback like “be more proactive.” If you want proactive, define it:

“By proactive, I mean raising risks earlier, proposing options, and documenting next steps after stakeholder meetings.”

That turns a personality critique into a behavior target.

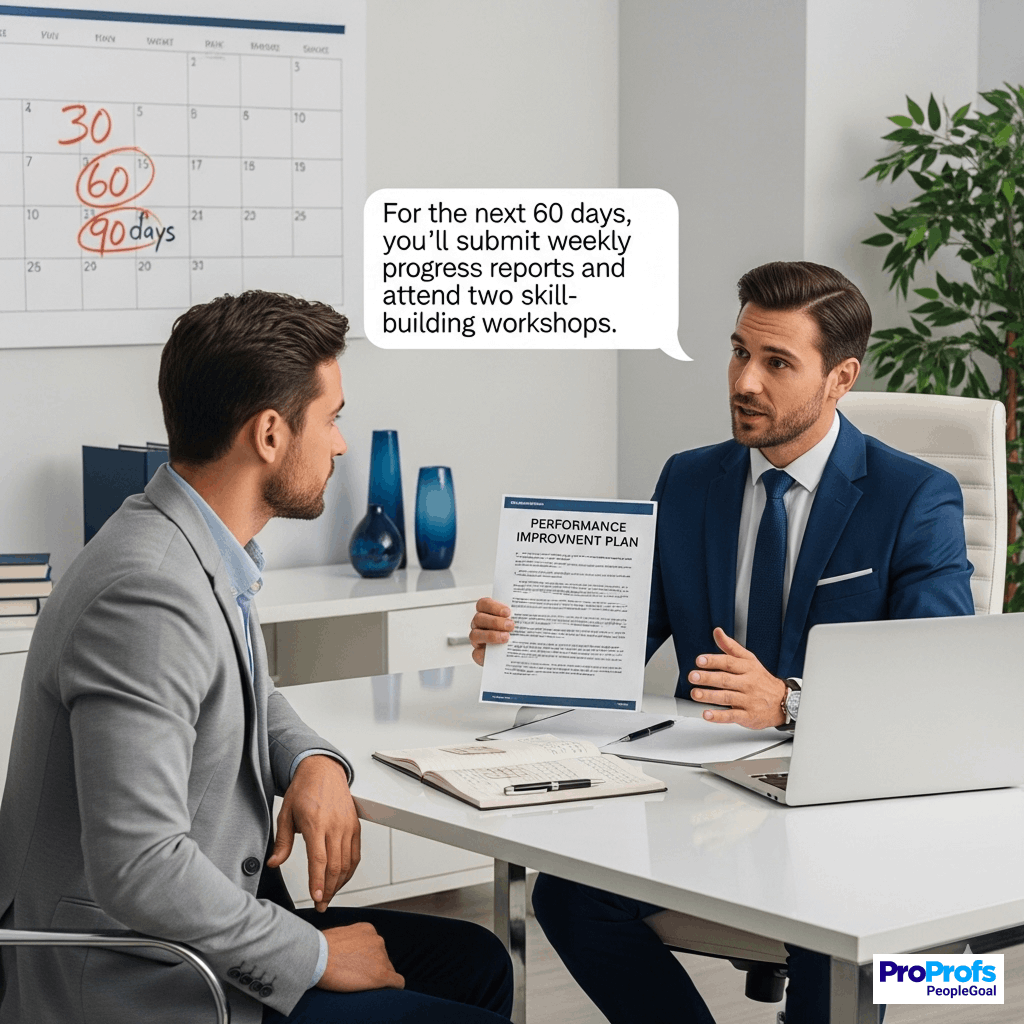

3. Address Poor Performance With Support First, Then Use A PIP

If the evaluation shows persistent gaps, HR should guide a structured response. Sometimes it’s coaching. Sometimes it’s a question of role fit. Sometimes it’s a formal performance improvement plan (PIP).

A PIP shouldn’t be used as a surprise punishment. It should be the formal step taken only after clear feedback and support haven’t changed outcomes.

PIP requirements (practically speaking):

- a written summary of issues (observable, not judgmental)

- specific expectations tied to role standards

- SMART goals and milestones

- support offered (training, mentoring, resources)

- a timeline (often 30/60/90 days)

- consequences if expectations aren’t met

Here are two quick performance evaluation examples that show the difference between weak and strong documentation:

Weak: “Not a team player. Needs better attitude.”

Strong: “In three cross-functional meetings (Oct 3, Nov 12, Dec 7), Alex interrupted stakeholders and dismissed concerns without proposing alternatives. Expectation: demonstrate collaborative disagreement by summarizing the concern, offering one alternative option, and confirming next steps.”

That second one is uncomfortable, but it’s fair, actionable, and defensible.

PIP Goal Examples (SMART, Not Vibes)

| Performance Area | Not Helpful | PIP-Ready SMART Goal |
|---|---|---|
| Timeliness | “Meet deadlines.” | “Deliver weekly status report by 3 pm Friday for 8 consecutive weeks with risks flagged 48 hours in advance.” |
| Quality | “Do better work.” | “Reduce returned defects from 12/week to ≤3/week by following the QA checklist and peer review before submission.” |
| Communication | “Be more proactive.” | “Send stakeholder updates every Tuesday with progress, blockers, and next steps; no more than 1 escalation due to lack of updates in 60 days.” |

Notice what’s happening here: no mind-reading required. The employee knows exactly what success looks like.
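Because these goals are measurable, tracking them can be close to mechanical. As a sketch, here is how the timeliness goal ("8 consecutive weeks") might be checked from a weekly on-time log; the function names and the log format are hypothetical.

```python
def longest_streak(on_time_flags):
    """Length of the longest run of consecutive on-time weeks."""
    best = current = 0
    for on_time in on_time_flags:
        current = current + 1 if on_time else 0
        best = max(best, current)
    return best


def timeliness_goal_met(on_time_flags, required_weeks=8):
    """True once the log contains the required number of consecutive on-time weeks."""
    return longest_streak(on_time_flags) >= required_weeks


# Seven on-time weeks, one miss, then three more: the streak resets at the miss.
log = [True] * 7 + [False] + [True] * 3
print(timeliness_goal_met(log))
```

The point isn't the code. It's that both sides can look at the same log and reach the same answer.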

Now let’s zoom out. If your evaluation process is built for 2016, it will feel clunky in 2026.

So let’s talk trends that are already reshaping how HR runs evaluation cycles.

Future Trends: Performance Evaluation

The future isn’t “no performance reviews.” It’s better inputs, lighter admin burden, and more real-time coaching, without losing fairness.

1. AI Can Summarize Feedback & Coach Managers, Without Letting It Decide The Rating

AI is increasingly used to:

- Summarize multi-source feedback into themes

- Identify strengths and recurring development areas

- Nudge managers toward better wording and more evidence

- Flag potential bias signals (like consistently vague feedback for certain groups)

The best use case is not “AI writes the review for you.” It’s “AI helps you organize what you already know” and prompts better thinking.

If you adopt AI support, HR should set guardrails:

- Managers remain accountable for accuracy and decisions

- Employees can see evidence, not just summaries

- Sensitive data handling is explicit

- The system avoids turning sentiment into “truth.”

2. Shift Toward Dynamic Performance Management So Year-End Reviews Stop Feeling Like Forensics

Dynamic Performance Management (DPM) is the idea that performance is shaped continuously, so management should be, too.

Instead of relying on a year-end memory dump, DPM uses:

- regular check-ins

- lightweight progress updates

- project retrospectives

- continuous feedback loops

- real-time coaching prompts

Evaluation still exists, but it’s a summary of a well-documented year, not a forensic reconstruction.

3. Track Skills Progress So Evaluations Support Mobility, Not Just A Score At Year-End

As roles evolve faster, “Did you hit the goal?” isn’t enough. Organizations are increasingly tracking:

- skill proficiency (current level)

- skill growth (progress over time)

- skill application (where it showed up in work)

This matters for internal mobility, workforce planning, and fairer growth conversations. It also helps employees see a path forward that isn’t only “get promoted.”
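The three things being tracked (proficiency, growth, application) map naturally onto a small record per skill per cycle. The structure below is only a sketch; the field names and the 1–5 proficiency scale are assumptions, and snapshots are expected in chronological order.

```python
from dataclasses import dataclass


@dataclass
class SkillSnapshot:
    skill: str
    cycle: str        # e.g. "2025"
    proficiency: int  # hypothetical 1-5 scale (current level)
    evidence: str     # where the skill showed up in work (application)


def skill_growth(snapshots, skill):
    """Change in proficiency between the first and last snapshot of a skill.

    Returns None when there are fewer than two snapshots to compare.
    """
    levels = [s.proficiency for s in snapshots if s.skill == skill]
    if len(levels) < 2:
        return None
    return levels[-1] - levels[0]
```

Keeping the evidence field mandatory is the design choice that matters: a proficiency number with no example behind it is just another vibe.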

Two lines before we land this plane: trends don’t replace fundamentals, they amplify them.

If your evidence and bias controls are weak, new tech just helps you be wrong faster.

Ready to Take the Next Step in the Performance Evaluation Process?

A strong evaluation phase isn’t about perfect ratings. It’s about creating a fair, evidence-based summary that people can learn from. When the performance evaluation process is clear, the conversation becomes less defensive and more developmental. When your performance evaluation procedure includes multi-source input, structured goals, and bias controls, you get ratings people trust, even when they don’t love them.

Most importantly: the end-of-fiscal-year review isn’t the finish line. It’s the handoff. Once you close the evaluation, you should use those insights to plan the next cycle: new goals, a new growth focus, and fewer surprises.

And if you’re supporting managers through all of this, a platform like PeopleGoal can be a helpful home base for keeping goals, feedback, and review documentation organized, without turning the process into a paperwork marathon.

Frequently Asked Questions

Should We Still Use Numerical Ratings?

There’s a growing push toward richer narrative assessments because they’re more actionable. Some organizations also suspend or soften numerical ratings in unusually disruptive periods to emphasize support and resilience. If you keep ratings, make sure the narrative is strong enough that the number isn’t doing all the meaning-making.

What Is The Best Way To Handle Poor Performers During A Review?

Don’t “perform the punishment.” Start with curiosity: what’s causing the gap? Is it skill, clarity, support, capacity, or something else? If issues persist, move to a formal performance improvement plan (PIP) with clear goals, timelines, and consequences.

How Does 360-Degree Feedback Impact The Overall Rating?

360 input should inform the manager’s view, but the rating of record is assigned to an individual based on role expectations and outcomes. Peer input improves fairness by adding perspectives, especially in cross-functional work, but shouldn’t become a vote.
