I’ve often seen evaluation guides talk about things like “creating clarity” or “driving forward motion.” This isn’t one of those guides.

Instead, I want to start with what actually brings companies to us in the first place. A CFO who has never run a structured review cycle. An HR manager chasing performance review PDFs across endless email threads. A consultant manually assigning evaluators for 75 employees in a spreadsheet.

These aren’t rare situations. They’re surprisingly common.

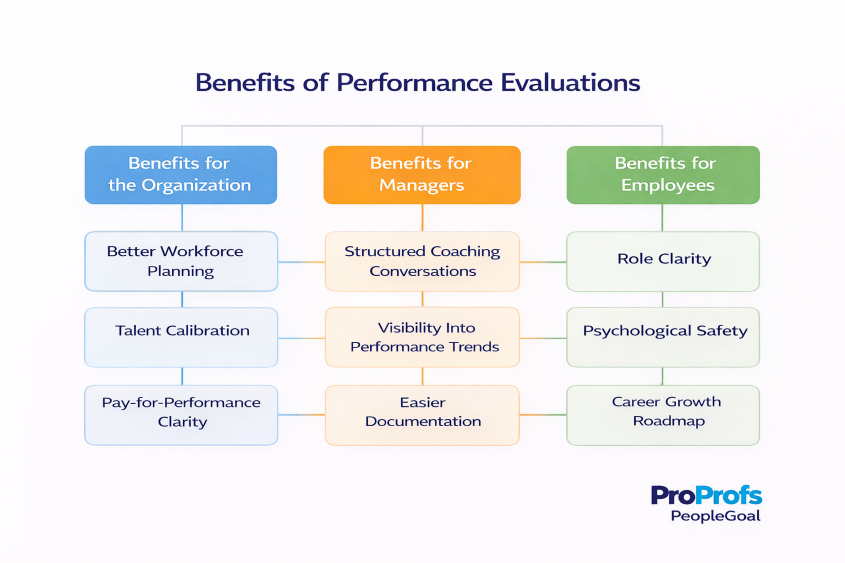

So instead of focusing on theory, I want to focus on the real problems teams deal with every day, and on the benefits of employee performance evaluation when the process reflects actual work rather than being just a form to fill out.

In the sections ahead, I’ll walk through the core benefits of performance evaluations when they are designed to support how teams truly operate.

What Are the Three Benefits of Performance Evaluations?

If you’re here to understand what structured performance evaluations actually deliver, or to make the case internally for improving your current process, start here.

These are the three outcomes that show up consistently across organizations that get evaluations right.

1. Team Productivity Increases When Expectations Are Explicit

The connection between evaluations and team output is frequently underestimated. When employees know exactly what they’re being measured on, they prioritize differently. When managers document goals at the start of a cycle and review them at the midpoint, course corrections happen in weeks rather than quarters.

| Sandvine saw this directly. Before structured evaluations, linking individual goals to department and company objectives was inconsistent and manually tracked. After implementing SMART goal-based evaluations, they achieved a 5x increase in employees actively setting and tracking goals, with over 2,500 individual goals set annually. Productivity didn’t improve because people worked harder. It improved because they were consistently working on the right things. |

What this means for your team:

- Goals set at the start of a cycle give employees a clear target to optimize toward

- Mid-cycle check-ins catch misalignment before it becomes a performance problem

- Documented expectations reduce the “I didn’t know that was a priority” conversations

2. Decision Quality Improves Across Promotions, Compensation, and Exits

The decisions that carry the most organizational risk (who gets promoted, who gets a raise, when to address underperformance) are the ones most often made without adequate documentation. That’s not a leadership failure. It’s a systems failure.

When evaluation data is structured and consistent, decision quality improves across the board:

| Decision Type | Without Structured Evaluations | With Structured Evaluations |
|---|---|---|
| Promotions | Based on visibility and recent performance | Based on documented history across full cycle |
| Compensation | Feels arbitrary, creates resentment | Tied to clear performance criteria |
| Underperformance | Addressed late, high stakes for everyone | Caught early, coaching-led |
| Exits | Poorly documented, legal exposure | Supported by clear performance record |

What this means for your leadership team:

- Promotion decisions become explainable before they’re questioned

- Compensation conversations shift from negotiation to criteria-based discussion

- Underperformance is addressed at month three instead of month nine

3. Individual Development Becomes Trackable, Not Theoretical

Most organizations have development conversations. Far fewer have development outcomes. The gap between the two is almost always documentation.

| Leica Geosystems is a clear example of what changes when decision quality improves at scale. With 20,000 employees across global markets, their performance data was held in disparate systems, making benchmarking and reporting virtually impossible. HR Director Kevin Vaz described it as taking “stabs in the dark.” After centralizing evaluations on one platform, Leica gained complete global visibility into performance. Their Asia market revenue more than doubled in two years following the implementation. |

What this means for your employees:

- Feedback tied to specific behaviors gives people something concrete to act on

- Regular touchpoints mean development gaps are addressed before they affect performance ratings

- Career growth conversations happen throughout the year, not just during review season

The direct answer to the question most readers came here with:

- Will structured evaluations improve team performance? Yes, when goals are explicit and progress is tracked at regular intervals.

- Will they help with promotion decisions? Yes, when you have documented history rather than recent impressions driving the call.

- Will they reduce friction with underperformers? Yes, when issues are identified and addressed at month three instead of month nine.

Why Most Organizations Actually Need Structured Evaluations

Companies don’t come looking for a performance evaluation system because they read a best-practice blog. Something usually breaks first. Here are the triggers I see most often:

- Promotions felt political: When advancement isn’t tied to documented performance, visibility wins over contribution. Once that perception sets in, it’s hard to undo.

- A manager left and took all context with them: No evaluation history means no record of goals, feedback, or progress. The incoming manager starts from zero.

- The team scaled past what informal feedback can handle: What works for 15 people collapses at 50. Memory-based feedback doesn’t survive growth.

- Compensation decisions got contentious: When raises feel arbitrary, employees compare notes. That conversation, happening quietly across your organization, is one you don’t want.

- Admin consumed the HR calendar: Spreadsheet-based performance cycles become so time-consuming that HR spends more effort collecting forms than actually developing people.

How Do Effective Performance Evaluations Improve Results?

Most guides stop at “evaluations create clarity.” That’s not enough. Here’s what specifically shifts across four areas when reviews are structured properly.

1. Promotions become defensible

| Before | After |
|---|---|
| Manager explains decision after the fact | Decision is visible before it's made |
| Advancement tied to visibility | Advancement tied to documented history |
| "Who you know" perception | Merit-based record anyone can see |

When evaluations are consistent, the promotion conversation changes entirely. You’re not making a case; you’re pointing to 18 months of documented performance. That heads off the perception of favoritism, which can otherwise attach even to decisions that are genuinely merit-based.

2. Managers stop evaluating from memory

Most managers, without realizing it, evaluate based on the last 60 days. Someone has a strong Q4 and suddenly their whole year looks good. Someone stumbles in October and a strong Q2 gets forgotten. This isn’t malicious bias. It’s just how cognition works under time pressure.

What structured evaluations change:

- Monthly check-ins or milestone reviews create a running record

- Formal reviews become summaries of documented evidence, not reconstructions

- Manager feedback shifts from “I think you had a good year” to a quarter-by-quarter account
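To make the recency effect concrete, here is a minimal arithmetic sketch. The quarterly scores, the 1-5 scale, and both function names are hypothetical and chosen only for illustration; the sketch does not describe how any particular platform computes ratings.

```python
from statistics import mean

# Hypothetical quarterly check-in scores on a 1-5 scale (illustrative data only).
checkins = {"Q1": 3, "Q2": 5, "Q3": 4, "Q4": 2}


def memory_based_rating(scores: dict[str, int]) -> float:
    """Rating driven only by the most recent quarter, which is how recency bias behaves."""
    last_quarter = sorted(scores)[-1]
    return float(scores[last_quarter])


def record_based_rating(scores: dict[str, int]) -> float:
    """Rating that summarizes the full documented record."""
    return mean(scores.values())


print(memory_based_rating(checkins))  # 2.0
print(record_based_rating(checkins))  # 3.5
```

With the same underlying year, the memory-based view lands on 2.0 because of a weak final quarter, while the documented record averages 3.5. A simple average is only one possible summary; the point is that a running record makes the strong Q2 visible at review time.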

3. Underperformance gets caught earlier

Here’s an uncomfortable truth: most performance problems are visible months before they’re addressed. A manager notices something in March, hopes it improves, says nothing. By December, nine months have passed without course correction.

The difference this makes:

- Issue caught in month 3 → coaching conversation, time to turn it around

- Issue addressed in month 9 → formal process, limited runway, higher stakes for everyone

Mid-year checkpoints create natural moments to raise concerns early, not as warnings but as coaching. The language shifts from “here’s your performance problem” to “here’s what I’m seeing, here’s what I need, here’s how I’ll support you.”

4. Employees understand exactly how to grow

One of the most common things HR leaders tell us: “My team doesn’t know what good looks like.” That’s not a motivation problem. It’s a structural problem.

Vague feedback vs. structured feedback:

| Vague | Structured |
|---|---|
| "Be more proactive" | "In the last two projects, decisions were escalated to me that you had the authority to make. Here are three examples." |
| "Improve your communication" | "In cross-team meetings, context wasn't shared ahead of time, which caused two re-runs. Here's what I'd expect instead." |
| "Take more ownership" | "When the client escalated in Q3, the issue sat for four days. Here's the response pattern I'd want to see." |

Development plans built on specific feedback get executed. Vague ones get filed and forgotten.

One thing that makes this harder than it should be: a single evaluation template applied across every role. Dialogue ran into this directly. Their engineering, sales, and operations teams were being assessed against identical criteria, which made feedback generic by default.

Moving to role-specific templates with consistent company-wide standards significantly improved feedback quality because managers were finally asking questions that matched the actual work.

Which Employee Evaluation Approach to Use

Most guides list five evaluation models and then conclude with “it depends.” That rarely helps anyone make a decision.

A better way to think about it is to start with your situation. What stage is your company in? How structured are your reviews today? And what outcomes are you trying to achieve?

When organizations begin to answer these questions, the benefits of employee performance evaluation become much clearer. The right evaluation approach helps teams move from scattered feedback and inconsistent reviews to a process that actually supports growth, accountability, and better decision-making.

Instead of comparing models in theory, the sections below will help you choose an approach that fits the way your organization actually works.

- Fewer than 50 people, no dedicated HR function: Start with annual reviews plus quarterly check-ins. Three to five goals per person, documented manager feedback, a brief self-assessment. Consistency matters more than sophistication at this stage.

- Replacing a manual process (PDFs, spreadsheets, email chains): Solve the admin problem before choosing a model. Map what actually needs to happen, who reviews whom, how ratings are calibrated, and where results go. Then find a system that fits the process, not the other way around.

- Managers’ ratings are inconsistent across teams: This is a calibration problem, not a model problem. Define what each rating level means in behavioral terms. Run a calibration session before reviews finalize, where managers compare ratings and justify outliers.

- Project-based work that doesn’t fit calendar cycles: Tie evaluations to project milestones instead. A consultant or campaign manager can be meaningfully reviewed at the close of a major initiative. The question shifts from “what did you do this year” to “what did you deliver, how did you work, what did the team learn.”

- Needing feedback from multiple directions: 360-degree reviews work well for leadership roles and situations where the manager doesn’t have full visibility. They fail when used as a substitute for manager accountability. Use them to add perspective, not to avoid difficult conversations.

- Managing probation periods or new hires: Build a separate, shorter review cycle for these moments. A 45-day check-in is specifically about alignment, not performance scoring. Forcing it into the standard framework creates confusion for everyone.

- Scaling rapidly with new hires joining regularly: Connect evaluations explicitly to company goals. When individual performance is visibly tied to OKRs, new hires understand what good looks like from day one rather than figuring it out six months in.

Why Performance Evaluations Fail (And What to Do Instead)

The failure modes are worth naming directly because most organizations are experiencing at least one of them right now.

1. Recency bias: The last two months dominate the review regardless of what happened before.

Fix: Require documented check-ins throughout the year so reviews reflect a full record.

2. Ratings without evidence: A manager gives a 4 out of 5 with no specific examples. The employee doesn’t know what earned that rating or what would change it.

Fix: Make example-based justification a required field, not an optional one.

3. Feedback appearing for the first time in a formal review: An employee hears critical feedback they’ve never received before. Trust collapses immediately.

Fix: Establish a no-surprises rule. Nothing in the formal review should be new information.

4. Reviews that lead nowhere: The form gets completed, ratings get filed, and within two weeks everyone has moved on. No development plan, no follow-up, no adjustment.

Fix: Every performance review produces at least one documented next action, whether a development goal, training investment, or role adjustment.

5. Calibration that never happens: Different managers apply different standards. One team’s “meets expectations” is another team’s “exceeds.” This surfaces painfully during compensation season.

Fix: Run a calibration session before reviews are finalized. Managers compare ratings together and flag inconsistencies before employees see results.

6. One template applied to every role: Generic questions produce generic answers. When an engineering team and a sales team answer identical criteria, feedback becomes meaningless for both.

Fix: Build role-specific templates that share a consistent rating standard but ask questions relevant to each function.
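The calibration fix in item 5 can be prepared with a quick check before the session. The sketch below assumes ratings on a 1-5 scale and flags managers whose average sits far from the overall average. The data, the `flag_for_calibration` name, and the 0.75 tolerance are all hypothetical, and a flag is a prompt for discussion, not proof that a manager is rating incorrectly.

```python
from statistics import mean

# Hypothetical ratings (1-5 scale) keyed by manager; illustrative data only.
ratings_by_manager = {
    "manager_a": [3, 3, 4, 3],
    "manager_b": [5, 5, 4, 5],
    "manager_c": [3, 4, 3, 4],
}


def flag_for_calibration(ratings: dict[str, list[int]], tolerance: float = 0.75) -> list[str]:
    """Return managers whose average rating differs from the overall average by more than `tolerance`."""
    all_ratings = [r for team in ratings.values() for r in team]
    overall = mean(all_ratings)
    return sorted(
        manager
        for manager, team in ratings.items()
        if abs(mean(team) - overall) > tolerance
    )


flagged = flag_for_calibration(ratings_by_manager)
print(flagged)  # ['manager_b']
```

Here `manager_b` averages 4.75 against an overall average of about 3.83, so that team's ratings are the ones to walk through first. The team may genuinely be stronger; the calibration session is where that gets justified.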

What a Functional Evaluation Process Looks Like

Understanding why performance evaluation is important isn’t enough to reap the benefits. Here’s what needs to happen operationally, without the inspiration.

Before the cycle opens:

- Define what each rating level means in concrete behavioral terms

- Communicate the timeline and expectations clearly to both managers and employees

- Train managers on the calibration standard for this specific cycle

During the cycle:

- Employees complete self-assessments first, as discussion inputs, not performance records

- Managers write performance reviews with specific examples supporting each rating

- Calibration review happens before anything is finalized or shared
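One way to enforce "specific examples supporting each rating" is to treat evidence as a required field and block submission when it is missing. The following is a minimal sketch under that assumption; `RatingEntry`, `missing_evidence`, and the sample review are hypothetical and not taken from any specific tool.

```python
from dataclasses import dataclass, field


@dataclass
class RatingEntry:
    """One rated criterion in a manager's review (hypothetical structure)."""
    criterion: str
    rating: int
    examples: list[str] = field(default_factory=list)


def missing_evidence(entries: list[RatingEntry]) -> list[str]:
    """Return the criteria whose rating has no non-blank supporting example."""
    return [
        entry.criterion
        for entry in entries
        if not any(example.strip() for example in entry.examples)
    ]


# Illustrative review; the example text is invented for this sketch.
review = [
    RatingEntry("Goal achievement", 4, ["Delivered the Q2 migration two weeks early."]),
    RatingEntry("Communication", 3, []),
    RatingEntry("Ownership", 4, ["  "]),
]

blocked = missing_evidence(review)
print(blocked)  # ['Communication', 'Ownership']
```

A review with a non-empty `blocked` list would go back to the manager before calibration, so every rating that reaches the employee comes with at least one concrete example.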

In the review conversation:

- Manager leads with context: “Here’s what I observed, here’s how I see your contributions.”

- Employee responds, and development priorities are agreed on together

- No rating should be a surprise at this point

After the review closes:

- Document development plans clearly and assign ownership so progress stays visible.

- Communicate compensation decisions with clear links to defined performance criteria.

- Schedule follow-up check-ins in advance so development plans stay active instead of quietly fading away.

The whole cycle should feel like a summary of an ongoing conversation, not an annual event everyone survives and forgets.

What This Looks Like at Different Scales

The right evaluation process depends on where you are, not just what you’re trying to achieve.

- 20-person team running the first formal cycle: Focus on building the habit first. Set simple goals, collect documented employee feedback, and run one mid-year check-in. Consistency matters more than perfection at this stage.

- 150-person organization moving off spreadsheets: Focus on removing friction. Managers should not spend hours chasing review completions. When the system handles reminders, tracking, and logistics, managers can spend their time where it matters most: coaching and meaningful conversations with their teams.

- Complex multi-cycle structure with compensation tied to performance: Prioritize visibility. Leadership needs aggregated performance data across teams without relying on managers to manually compile it each cycle.

A sophisticated system that managers route around is worse than a simple one they actually use.

Make Your Evaluation Process Work for You

When you design performance evaluations around how work actually happens, they stop feeling like administrative tasks and start working as decision tools. Teams know what they are working toward, managers have evidence instead of memory when making calls, and development conversations become ongoing rather than annual events.

The biggest shift is not the form or the rating scale. It is the presence of documented expectations, regular check-ins, and shared standards that make performance discussions clearer and fairer for everyone involved.

If your current process still relies on spreadsheets, scattered forms, or end-of-year recollections, it may be time to rethink the structure behind it. Platforms like PeopleGoal help teams run structured evaluations, track goals, and gather feedback in one place without adding more manual work.

Frequently Asked Questions

What are the most common performance evaluation criteria for employees?

The most common criteria include quality of work, goal achievement, communication, teamwork, problem-solving, reliability, and role-specific skills. Many companies also assess behaviors like ownership and adaptability.

How do you write meaningful performance evaluation comments?

Meaningful performance evaluation comments are specific and example-based. Instead of saying “good job,” mention what the employee did well, the impact it had, and what they can build on next. Clear, constructive language works best.

How do performance evaluations support employee development plans?

Evaluations highlight skill gaps, strengths, and growth opportunities. They help managers create development plans with clear actions, such as training, mentoring, stretch projects, or new responsibilities.

How can small businesses run effective evaluations without a large HR team?

Small businesses can keep evaluations simple by using short goal-based reviews, self-assessments, and quarterly check-ins. Consistency matters more than complexity, even with a lean team.

What’s the difference between KPIs and competencies in performance reviews?

Performance KPIs measure outcomes, like sales numbers or project delivery. Competencies measure how someone works, like leadership, collaboration, or communication. Strong reviews usually look at both.

What role does employee self-assessment play in performance evaluation?

Self-assessments give employees a voice in the process. They help surface accomplishments, challenges, and goals that managers may not fully see, making evaluations more balanced and collaborative.

How do you measure performance fairly for different roles and departments?

Fair measurement comes from role-specific goals and consistent criteria. Sales roles may focus on revenue, while support teams may focus on response quality. The key is aligning metrics with each job’s real impact.

What happens after a performance evaluation is completed?

After an evaluation, the next step should be action. That includes development plans, goal updates, coaching conversations, recognition, or promotion decisions. A review should lead to progress, not just documentation.

How can technology improve the evaluation process without making it feel impersonal?

Technology can automate reminders, organize feedback, and track goals, which reduces admin work. The process still feels human when leaders use the tools to support better conversations, not replace them.
